The Devil is in the Data: Learning Fair Graph Neural Networks via Partial Knowledge Distillation

Paper and Code

Nov 29, 2023

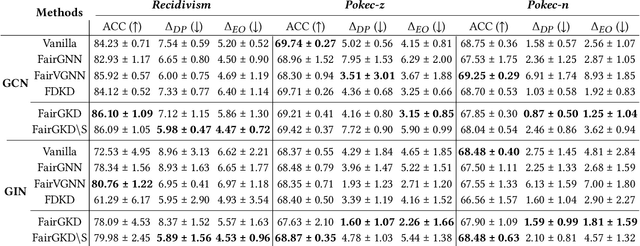

Graph neural networks (GNNs) are being increasingly used in many high-stakes tasks, and as a result, there is growing attention on their fairness recently. GNNs have been shown to be unfair as they tend to make discriminatory decisions toward certain demographic groups, divided by sensitive attributes such as gender and race. While recent works have been devoted to improving their fairness performance, they often require accessible demographic information. This greatly limits their applicability in real-world scenarios due to legal restrictions. To address this problem, we present a demographic-agnostic method to learn fair GNNs via knowledge distillation, namely FairGKD. Our work is motivated by the empirical observation that training GNNs on partial data (i.e., only node attributes or topology data) can improve their fairness, albeit at the cost of utility. To make a balanced trade-off between fairness and utility performance, we employ a set of fairness experts (i.e., GNNs trained on different partial data) to construct the synthetic teacher, which distills fairer and informative knowledge to guide the learning of the GNN student. Experiments on several benchmark datasets demonstrate that FairGKD, which does not require access to demographic information, significantly improves the fairness of GNNs by a large margin while maintaining their utility.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge