The Curious Case of Convex Networks

Jun 09, 2020

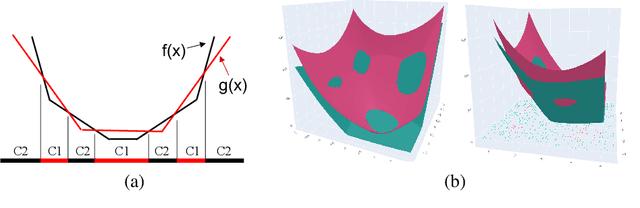

In this paper, we investigate a constrained formulation of neural networks in which the output is a convex function of the input. We show that the convexity constraints can be enforced on both fully connected and convolutional layers, making them applicable to most architectures. The constraints consist of restricting the weights (for all but the first layer) to be non-negative and using a non-decreasing convex activation function. Although simple, these constraints have profound implications for the generalization ability of the network. We draw three valuable insights: (a) Input Output Convex Networks (IOC-NN) self-regularize and almost eliminate the problem of overfitting; (b) although heavily constrained, they come close to the performance of the base architectures; and (c) an ensemble of convex networks can match or outperform its non-convex counterparts. We demonstrate the efficacy of the proposed idea through thorough experiments and ablation studies on the MNIST, CIFAR10, and CIFAR100 datasets with three different neural network architectures. The code for this project is publicly available at: https://github.com/sarathsp1729/Convex-Networks.
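The two constraints described above can be illustrated with a minimal pure-Python sketch. It is not taken from the linked repository: the helper names (`build_ioc_mlp`, `forward`, `midpoint_gap`), the layer sizes, the use of ReLU as the non-decreasing convex activation, and the use of absolute values to make weights non-negative are all assumptions chosen for illustration. The sketch builds a small fully connected network with random weights and numerically checks midpoint convexity, f((x+y)/2) <= (f(x)+f(y))/2.

```python
import random


def relu(v):
    # ReLU is convex and non-decreasing, so it satisfies the activation constraint.
    return [max(0.0, x) for x in v]


def matvec(W, x, b):
    # Affine map W x + b on plain Python lists.
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def make_layer(rng, n_out, n_in, nonneg):
    W = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    if nonneg:
        # Assumption: take absolute values to obtain non-negative weights.
        # Clipping at zero would be another way to satisfy the same constraint.
        W = [[abs(w) for w in row] for row in W]
    b = [rng.uniform(-1.0, 1.0) for _ in range(n_out)]  # biases are unconstrained
    return W, b


def build_ioc_mlp(rng, sizes):
    # The first layer (i == 0) is unconstrained; every later layer is non-negative.
    return [
        make_layer(rng, sizes[i + 1], sizes[i], nonneg=(i > 0))
        for i in range(len(sizes) - 1)
    ]


def forward(layers, x):
    h = x
    for i, (W, b) in enumerate(layers):
        h = matvec(W, h, b)
        if i < len(layers) - 1:  # no activation on the output layer
            h = relu(h)
    return h[0]  # scalar output


def midpoint_gap(layers, x, y):
    # Non-negative (up to rounding) whenever the network is convex in its input.
    mid = [(a + b) / 2.0 for a, b in zip(x, y)]
    return 0.5 * (forward(layers, x) + forward(layers, y)) - forward(layers, mid)


if __name__ == "__main__":
    rng = random.Random(0)
    net = build_ioc_mlp(rng, [4, 8, 8, 1])
    gaps = []
    for _ in range(1000):
        x = [rng.uniform(-3.0, 3.0) for _ in range(4)]
        y = [rng.uniform(-3.0, 3.0) for _ in range(4)]
        gaps.append(midpoint_gap(net, x, y))
    print("smallest midpoint gap:", min(gaps))
```

The check passes because an affine first layer followed by a convex non-decreasing activation is convex, and non-negative combinations of convex functions stay convex through every later layer. In a trained network the non-negativity would have to be maintained during optimization as well, which this sketch does not cover.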
