The Costs and Benefits of Goal-Directed Attention in Deep Convolutional Neural Networks

Feb 06, 2020

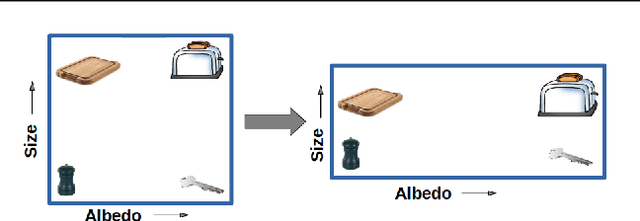

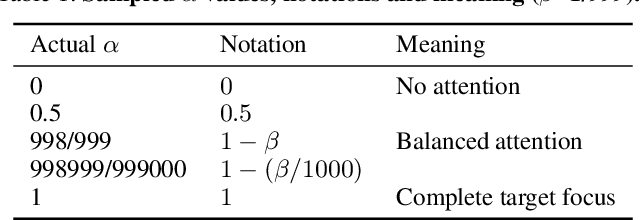

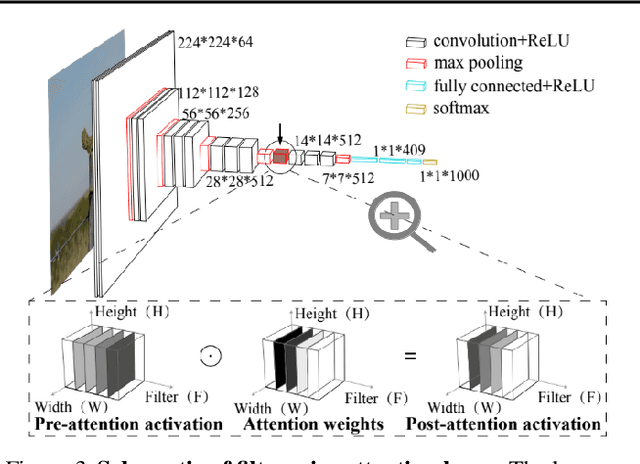

Attention in machine learning is largely bottom-up, whereas people also deploy top-down, goal-directed attention. Motivated by neuroscience research, we evaluated a plug-and-play, top-down attention layer that is easily added to existing deep convolutional neural networks (DCNNs). In object recognition tasks, increasing top-down attention has benefits (increasing hit rates) and costs (increasing false alarm rates). At a moderate level, attention improves sensitivity (i.e., increases $d^\prime$) at only a moderate increase in bias for tasks involving standard images, blended images, and natural adversarial images. These theoretical results suggest that top-down attention can effectively reconfigure general-purpose DCNNs to better suit the current task goal. We hope our results continue the fruitful dialog between neuroscience and machine learning.
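
The trade-off described above can be made concrete with the standard equal-variance signal detection formulas, where sensitivity is $d^\prime = z(H) - z(F)$ and bias is the criterion $c = -\tfrac{1}{2}\,[z(H) + z(F)]$, for hit rate $H$ and false alarm rate $F$. The following is a minimal sketch, not the authors' code: the function name `sensitivity_and_bias`, the clipping constant `eps`, and the example rates are all hypothetical, and the abstract does not say which bias measure the paper uses, so the criterion $c$ is an assumption.

```python
from statistics import NormalDist


def sensitivity_and_bias(hit_rate, false_alarm_rate, eps=1e-6):
    """Return (d_prime, criterion) under the equal-variance Gaussian model.

    Rates are clipped to [eps, 1 - eps] because the inverse normal CDF is
    undefined at exactly 0 or 1. The clipping value is an assumption made
    for this sketch, not something taken from the paper.
    """
    std_normal = NormalDist()  # mean 0, standard deviation 1
    h = min(max(hit_rate, eps), 1 - eps)
    f = min(max(false_alarm_rate, eps), 1 - eps)
    z_hit = std_normal.inv_cdf(h)
    z_fa = std_normal.inv_cdf(f)
    d_prime = z_hit - z_fa              # larger means better discrimination
    criterion = -0.5 * (z_hit + z_fa)   # lower means more willing to say "present"
    return d_prime, criterion


# Hypothetical rates, chosen only to illustrate the calculation.
for label, h, f in [("no attention", 0.70, 0.10), ("moderate attention", 0.85, 0.15)]:
    d, c = sensitivity_and_bias(h, f)
    print(f"{label}: d'={d:.3f}, c={c:.3f}")
```

If hits rise faster than false alarms (in z-units), $d^\prime$ increases even though the criterion shifts toward responding "present", which is the pattern the abstract reports for moderate attention.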
