Teaching on a Budget in Multi-Agent Deep Reinforcement Learning

May 28, 2019

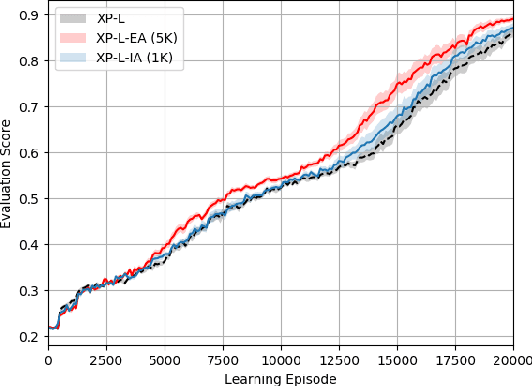

Deep Reinforcement Learning (RL) algorithms can solve complex sequential decision tasks successfully. However, they suffer from poor sample efficiency, a drawback that can often be mitigated by knowledge reuse. In Multi-Agent Reinforcement Learning (MARL) this drawback becomes worse, but at the same time agent interactions present a new set of opportunities to leverage knowledge. One promising approach among these is peer-to-peer action advising through a teacher-student framework. Although it was originally introduced for single-agent RL, recent studies show that it can also be applied to multi-agent scenarios with promising empirical results. However, studies in this line of research are currently very limited. In this paper, we propose heuristics-based action advising techniques for cooperative decentralised MARL, in which the task-level policy is represented by a nonlinear function approximator. By adopting the Random Network Distillation technique, we devise a measure that lets agents assess their knowledge of any given state and initiate the teacher-student dynamics with no prior role assumptions. Experimental results in a gridworld environment show that such an approach may indeed be useful and needs to be investigated further.
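To make the idea of a Random Network Distillation (RND) based knowledge measure more concrete, the following is a minimal sketch and not the paper's implementation. It assumes a fixed random target network, a linear predictor trained only on states the agent has visited, and the prediction error used as an uncertainty score. The names `RNDUncertainty` and `should_advise`, the thresholds, and the budget check are all hypothetical choices made for illustration.

```python
import numpy as np


class RNDUncertainty:
    """Illustrative RND-style uncertainty estimator (assumed design, not the paper's)."""

    def __init__(self, obs_dim, embed_dim=8, lr=0.1, seed=0):
        rng = np.random.default_rng(seed)
        # Target network: random weights that are never updated.
        self.w_target = rng.normal(size=(obs_dim, embed_dim))
        # Predictor: trained to imitate the target on visited states.
        self.w_pred = np.zeros((obs_dim, embed_dim))
        self.lr = lr

    def _error(self, obs):
        return obs @ self.w_pred - np.tanh(obs @ self.w_target)

    def uncertainty(self, obs):
        # High prediction error suggests the state has rarely been visited.
        return float(np.mean(self._error(obs) ** 2))

    def update(self, obs):
        # One gradient step on the mean squared prediction error.
        err = self._error(obs)
        self.w_pred -= self.lr * (2.0 / err.size) * np.outer(obs, err)


def should_advise(student_unc, teacher_unc, budget_left,
                  ask_threshold, give_threshold):
    """Hypothetical heuristic: advise only if the student is unsure,
    the peer is confident, and advising budget remains."""
    return (budget_left > 0
            and student_unc > ask_threshold
            and teacher_unc < give_threshold)


# Usage: one agent has visited a state many times, the other never has.
state = np.array([1.0, 0.0, 0.0, 0.0])
experienced = RNDUncertainty(obs_dim=4, seed=0)
novice = RNDUncertainty(obs_dim=4, seed=1)
for _ in range(500):
    experienced.update(state)

threshold = novice.uncertainty(state) / 2.0
advise = should_advise(novice.uncertainty(state),
                       experienced.uncertainty(state),
                       budget_left=10,
                       ask_threshold=threshold,
                       give_threshold=threshold)
```

Because both agents compute the same kind of score, neither needs to be designated teacher or student in advance; the roles follow from whose uncertainty is higher in the current state. A practical version would replace the linear predictor with a neural network and tune the thresholds and budget empirically.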
