TACTO: A Fast, Flexible and Open-source Simulator for High-Resolution Vision-based Tactile Sensors


Dec 15, 2020

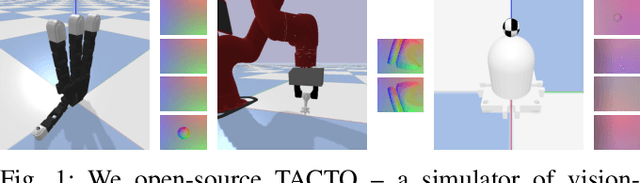

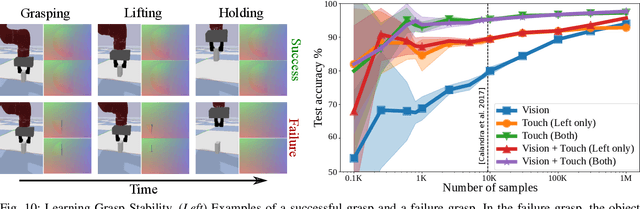

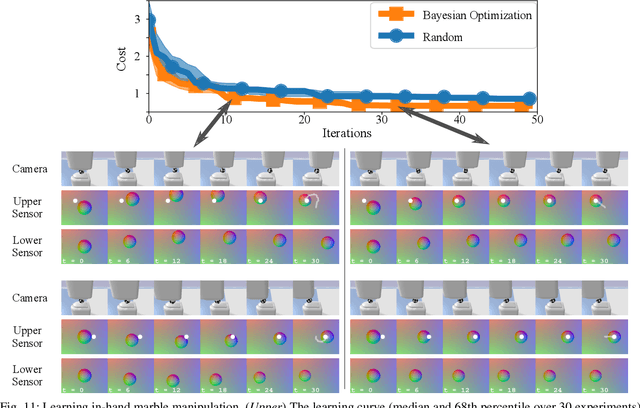

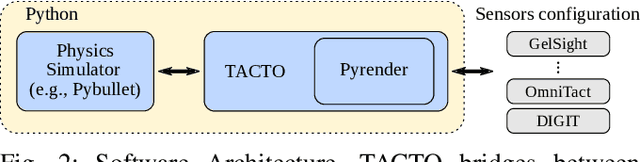

Simulators play an important role in prototyping, debugging and benchmarking new advances in robotics and learning for control. Although many physics engines exist, some aspects of the real world are harder than others to simulate. One aspect that has so far eluded accurate simulation is touch sensing. To address this gap, we present TACTO, a fast, flexible and open-source simulator for vision-based tactile sensors. The simulator renders realistic high-resolution touch readings at hundreds of frames per second, and can be easily configured to simulate different vision-based tactile sensors, including GelSight, DIGIT and OmniTact. In this paper, we detail the principles that drove the implementation of TACTO and how they are reflected in its architecture. We demonstrate TACTO on a perceptual task, learning to predict grasp stability using touch from 1 million grasps, and on a marble manipulation control task. We believe that TACTO is a step towards the widespread adoption of touch sensing in robotic applications, and towards enabling machine learning practitioners interested in multi-modal learning and control. TACTO is open-source at https://github.com/facebookresearch/tacto.
