T-RECS: Training for Rate-Invariant Embeddings by Controlling Speed for Action Recognition

Paper and Code

Mar 23, 2018

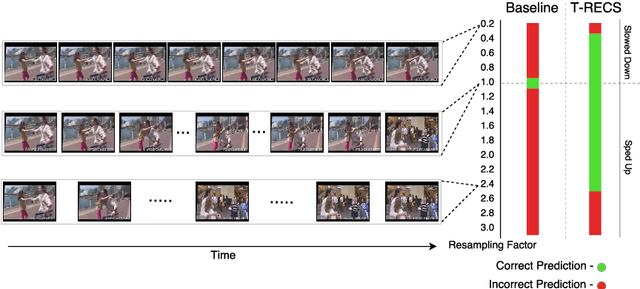

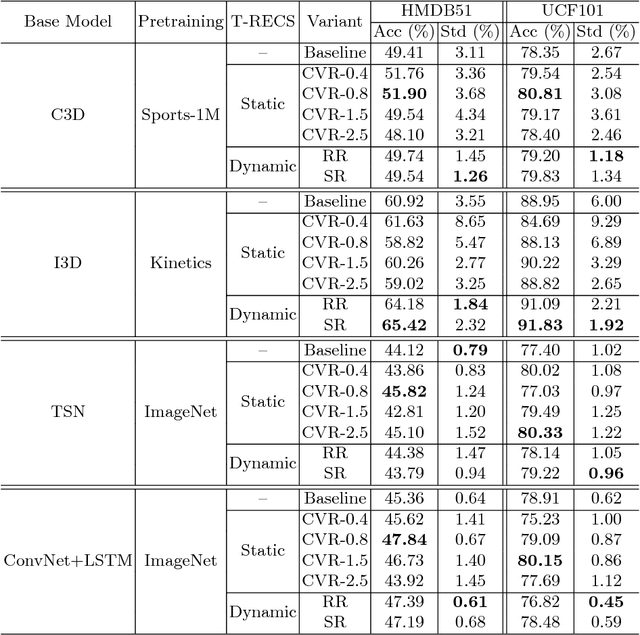

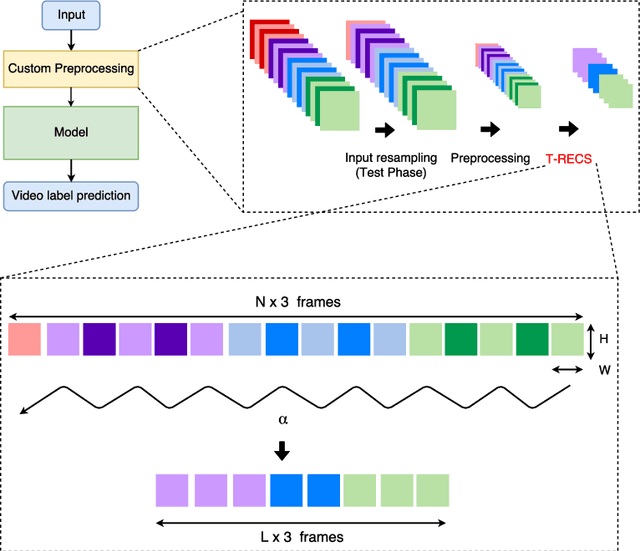

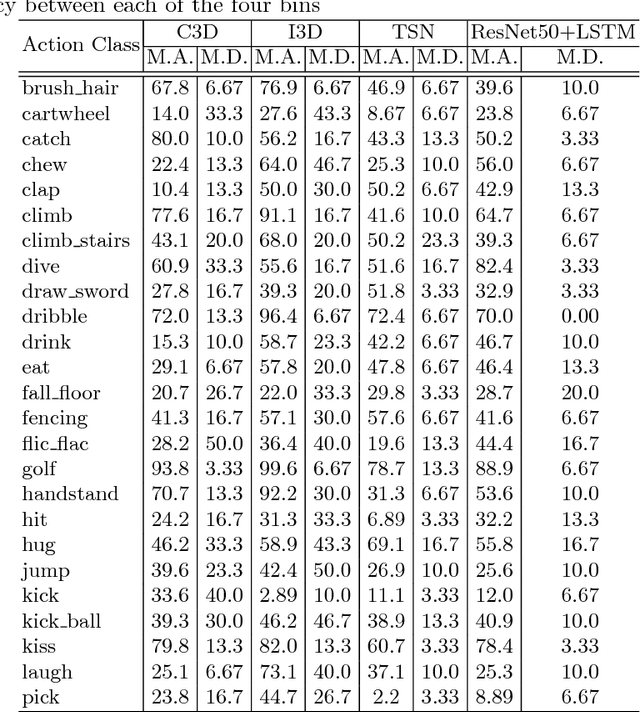

An action should remain identifiable when its speed changes: consider an expert chef and a novice chef each chopping an onion. We expect the novice to chop more slowly and deliberately than the expert. In general, the speed at which an action is performed, whether slower or faster than average, should not dictate how it is recognized. We explore the erratic behavior that this phenomenon causes in state-of-the-art deep-network-based methods for action recognition, measured in terms of maximum performance and stability of recognition accuracy across a range of input video speeds. By observing the trends in these metrics and summarizing them according to the expected temporal behavior with respect to variations in input video speed, we find two distinct types of network architectures. In this paper, we propose T-RECS, a preprocessing method that extends deep-network-based methods for action recognition to explicitly account for speed variability in the data. It does so by adaptively resampling the inputs to a given model. T-RECS is agnostic to the specific deep-network model; we apply it to four state-of-the-art action recognition architectures: C3D, I3D, TSN, and ConvNet+LSTM. On HMDB51 and UCF101, T-RECS-based I3D models show a peak improvement of at least 2.9% in performance over the baseline, while T-RECS-based C3D models achieve a maximum improvement in stability of 59% over the baseline on the HMDB51 dataset.
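To make "input video speed" concrete, the sketch below shows one simple way a clip could be temporally resampled at a fixed speed factor before being passed to a recognition model. It is an illustration only and not the T-RECS method itself, which the abstract describes as adaptive. The function name `resample_indices`, its parameters, and the choice to clamp at the last frame are all assumptions made for this example.

```python
import math


def resample_indices(num_frames, speed, clip_len):
    """Return frame indices for a clip of `clip_len` frames played at `speed`.

    Hypothetical helper: speed > 1 skips frames (faster playback),
    speed < 1 repeats frames (slower playback). Indices that would run
    past the end of the video are clamped to the last frame.
    """
    if num_frames <= 0 or clip_len <= 0 or speed <= 0:
        raise ValueError("num_frames, clip_len and speed must be positive")
    last = num_frames - 1
    # Step through the source video in increments of `speed` frames.
    return [min(math.floor(i * speed), last) for i in range(clip_len)]


# The same 10-frame video sampled at three playback speeds.
normal = resample_indices(10, 1.0, 4)
fast = resample_indices(10, 2.0, 4)
slow = resample_indices(10, 0.5, 4)
```

Evaluating a model on clips built from such index lists over a range of speed factors is one plausible way to probe how recognition accuracy changes with input speed.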
