Supervised training of spiking neural networks for robust deployment on mixed-signal neuromorphic processors


Feb 17, 2021

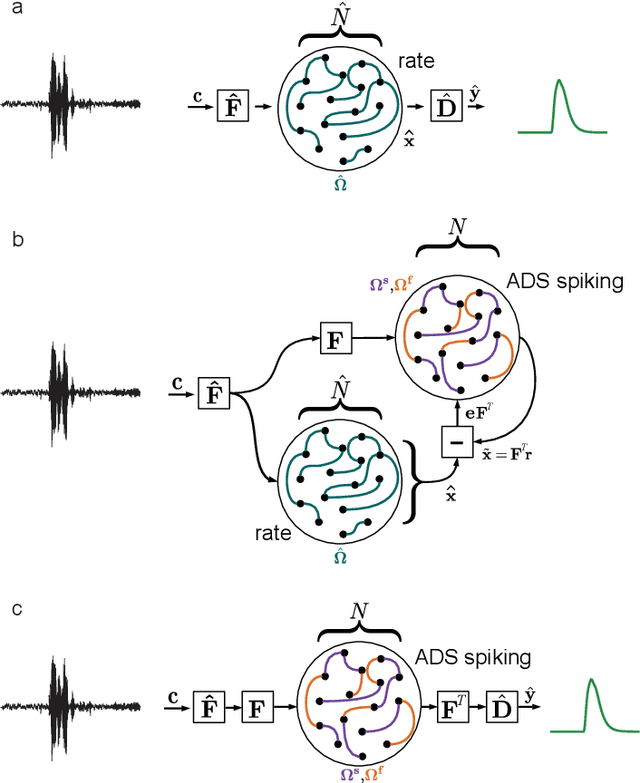

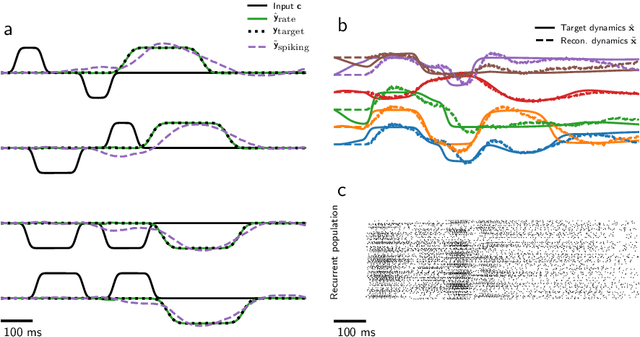

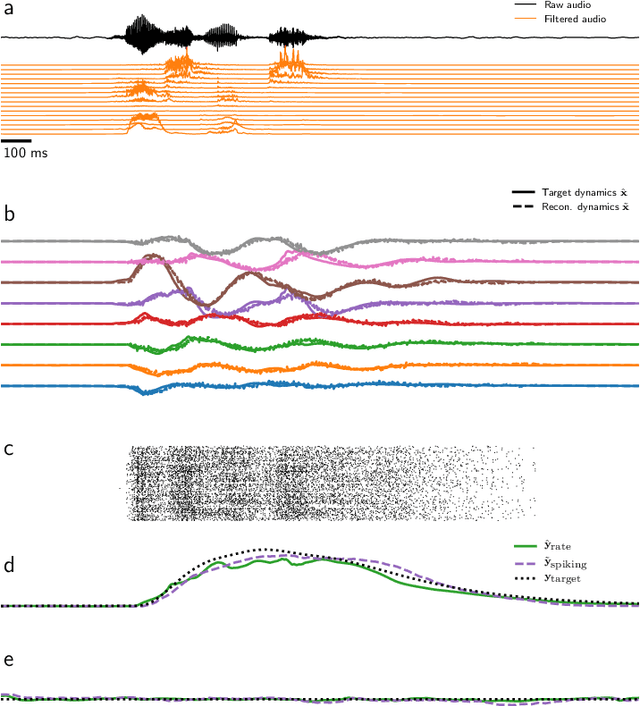

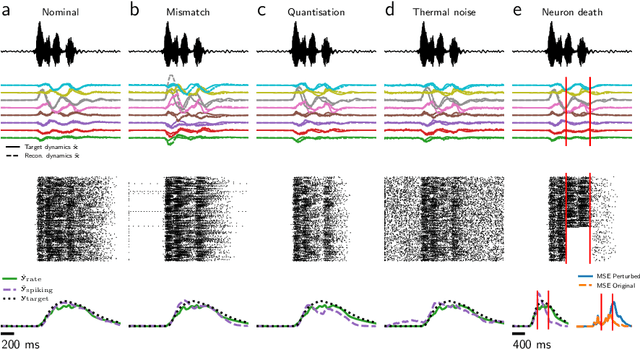

Mixed-signal analog/digital circuits can emulate spiking neurons and synapses with extremely high energy efficiency, an approach known as "neuromorphic engineering". However, analog circuits are sensitive to process-induced variation among transistors in a chip ("device mismatch"). For neuromorphic implementation of Spiking Neural Networks (SNNs), mismatch causes parameter variation between identically configured neurons and synapses. Each chip therefore exhibits a different distribution of neural parameters, causing deployed networks to respond differently between chips. Current solutions for mitigating mismatch, based on per-chip calibration or on-chip learning, entail increased design complexity, area, and cost, making deployment of neuromorphic devices expensive and difficult. Here we present a supervised learning approach that addresses this challenge by maximizing robustness to mismatch and other common sources of noise. Our method trains SNNs to perform temporal classification tasks by mimicking a pre-trained dynamical system, using a local learning rule adapted from non-linear control theory. We demonstrate our method on two tasks requiring memory, and measure the robustness of our approach to several forms of noise and mismatch. We show that our approach is more robust than several common alternatives for training SNNs. Our method provides a viable way to robustly deploy pre-trained networks on mixed-signal neuromorphic hardware, without requiring per-device training or calibration.
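To make the notion of device mismatch concrete, the following is a minimal sketch and not the authors' training method. It simulates a population of identically configured leaky integrate-and-fire neurons, then perturbs each neuron's membrane time constant and threshold to imitate one simulated "chip". The neuron model, the multiplicative Gaussian perturbation, the 20% mismatch level, and the names `simulate_lif` and `apply_mismatch` are all illustrative assumptions rather than details taken from the paper.

```python
import numpy as np

def simulate_lif(input_current, tau_mem, threshold, dt=1e-3):
    """Euler-integrate leaky integrate-and-fire neurons; return spike counts."""
    v = np.zeros(tau_mem.shape[0])
    counts = np.zeros(tau_mem.shape[0], dtype=int)
    for i_t in input_current:
        # Leaky integration of the shared input towards i_t
        v += dt / tau_mem * (-v + i_t)
        spiked = v >= threshold
        counts += spiked
        v[spiked] = 0.0  # reset membrane potential after a spike
    return counts

def apply_mismatch(nominal, rel_std, rng):
    """Assumed mismatch model: multiplicative Gaussian noise on each parameter."""
    factors = 1.0 + rel_std * rng.standard_normal(nominal.shape)
    # Clip so perturbed parameters stay strictly positive
    return nominal * np.clip(factors, 0.1, None)

rng = np.random.default_rng(0)
n_neurons = 100
tau_nominal = np.full(n_neurons, 20e-3)  # 20 ms, hypothetical nominal value
thr_nominal = np.full(n_neurons, 1.0)    # hypothetical nominal threshold
current = np.full(1000, 1.5)             # 1 s of constant input at dt = 1 ms

# Ideal case: every neuron has exactly the configured parameters
ideal_counts = simulate_lif(current, tau_nominal, thr_nominal)

# One simulated "chip": same configuration, 20% assumed parameter mismatch
chip_counts = simulate_lif(
    current,
    apply_mismatch(tau_nominal, 0.2, rng),
    apply_mismatch(thr_nominal, 0.2, rng),
)

print("ideal spike-count spread:", ideal_counts.std())
print("mismatched spike-count spread:", chip_counts.std())
```

In the ideal case all neurons fire identically, while under the assumed mismatch the spike counts spread across the population. Changing the seed stands in for moving to a different chip, which yields a different parameter distribution and therefore a different response to the same input.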
