Supervised Learning of Labeled Pointcloud Differences via Cover-Tree Entropy Reduction


Jan 19, 2018

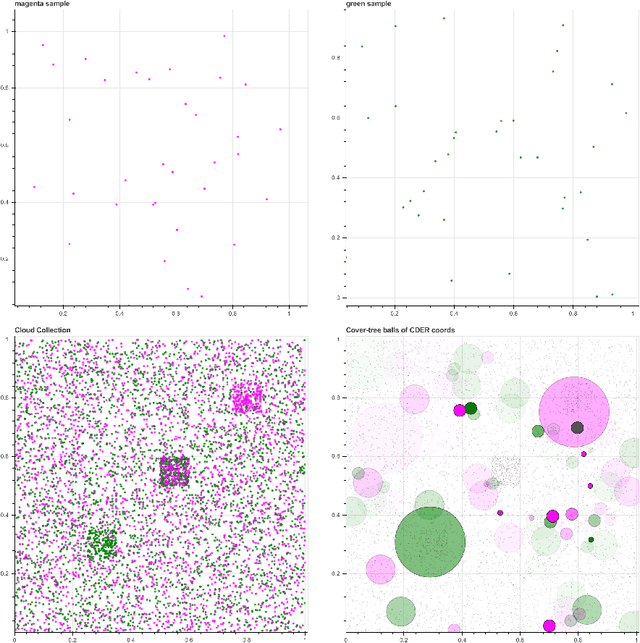

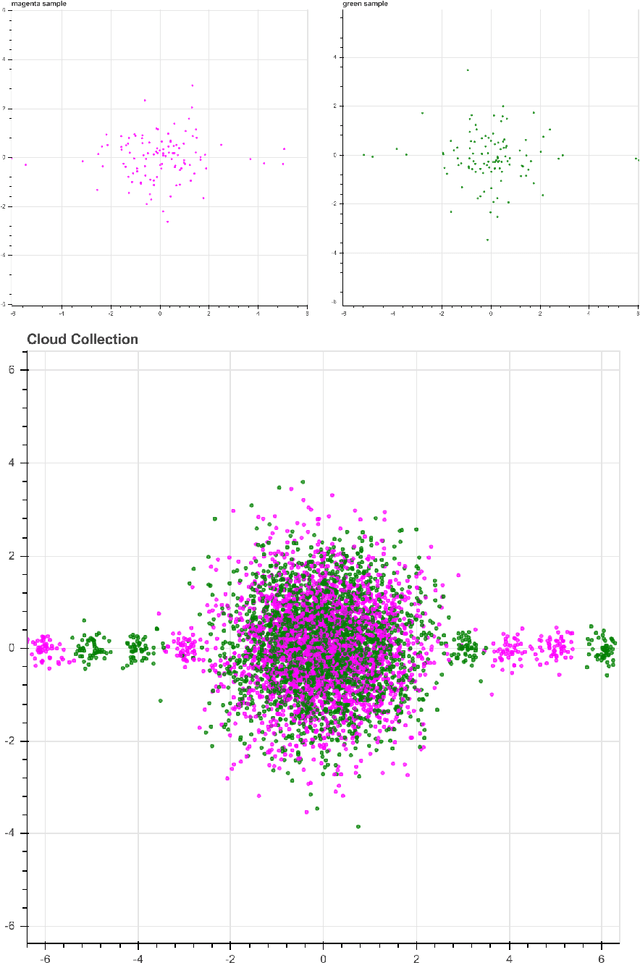

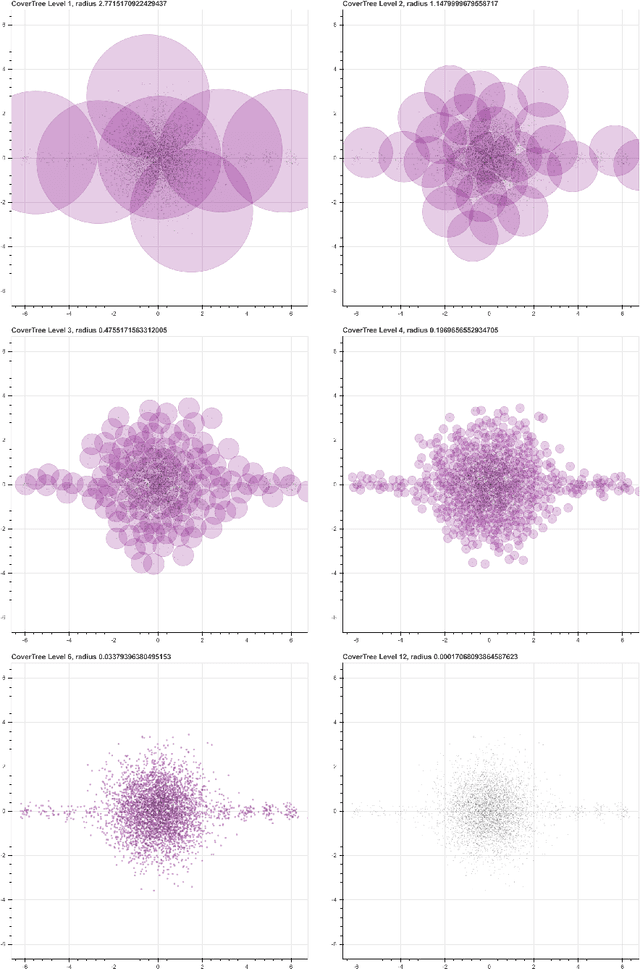

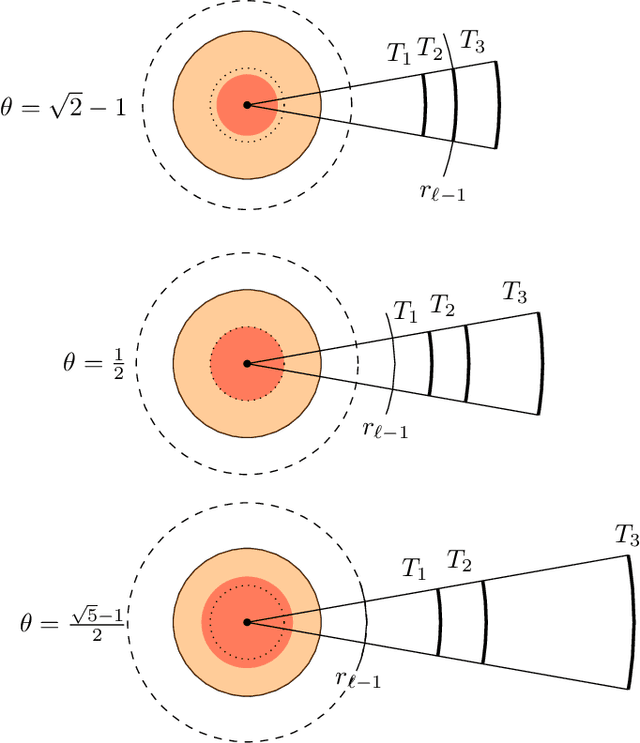

We introduce a new algorithm, called CDER, for supervised machine learning that merges the multi-scale geometric properties of Cover Trees with the information-theoretic properties of entropy. CDER applies to a training set of labeled pointclouds embedded in a common Euclidean space. If typical pointclouds corresponding to distinct labels tend to differ at any scale in any sub-region, CDER can identify these differences in (typically) linear time, creating a set of distributional coordinates which act as a feature extraction mechanism for supervised learning. We describe theoretical properties and implementation details of CDER, and illustrate its benefits on several synthetic examples.
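As a rough illustration of the entropy idea mentioned above, the following minimal sketch measures how mixed the labels are inside a single ball of a Euclidean space. It is not the authors' implementation and does not build a cover tree: the function name `ball_label_entropy`, the per-label mass normalization, and the choice of logarithm base are all assumptions made for this example. An entropy near 0 suggests the region is dominated by one label, while an entropy near 1 suggests the labels are indistinguishable there.

```python
import math


def ball_label_entropy(clouds, center, radius):
    """Hypothetical helper: label entropy inside one ball.

    clouds: list of (label, points) pairs, where points is a list of
            coordinate tuples in a common Euclidean space.
    Returns an entropy in [0, 1], or None if the ball is empty or
    fewer than two labels are present in the training set.
    """
    totals = {}   # total number of points per label
    inside = {}   # number of points per label that fall in the ball
    for label, points in clouds:
        totals[label] = totals.get(label, 0) + len(points)
        hits = sum(1 for p in points if math.dist(p, center) <= radius)
        inside[label] = inside.get(label, 0) + hits

    # Assumption: give every label the same total mass, so a label with
    # many points does not dominate simply because it is larger.
    masses = {lab: inside[lab] / totals[lab]
              for lab in totals if totals[lab] > 0}
    total_mass = sum(masses.values())
    if total_mass == 0 or len(masses) < 2:
        return None

    # Shannon entropy with the number of labels as the log base,
    # so the result is normalized to the range [0, 1].
    probs = [m / total_mass for m in masses.values()]
    return -sum(p * math.log(p, len(masses)) for p in probs if p > 0)


# Toy data: label "a" clusters near the origin, label "b" near (5, 5).
clouds = [
    ("a", [(0.0, 0.0), (0.1, 0.2), (0.2, -0.1)]),
    ("b", [(5.0, 5.0), (5.1, 4.9), (4.8, 5.2)]),
]
pure = ball_label_entropy(clouds, (0.0, 0.0), 1.0)    # only "a" inside
mixed = ball_label_entropy(clouds, (2.5, 2.5), 10.0)  # both labels inside
empty = ball_label_entropy(clouds, (100.0, 100.0), 1.0)
```

In this toy setup, a small ball around the origin contains only label "a", so it would be a natural candidate region for distinguishing the two labels; a large ball covering both clusters carries no such information.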

* Distribution Statement A - Approved for public release, distribution is unlimited. Version 2: added link to code, and some minor improvements. Version 3: updated authors and thanks.
