Structured Nonconvex and Nonsmooth Optimization: Algorithms and Iteration Complexity Analysis

Paper and Code

Jan 17, 2018

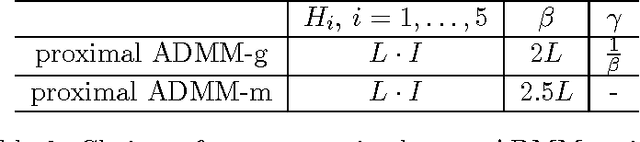

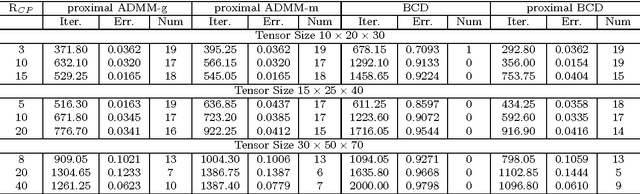

Nonconvex and nonsmooth optimization problems are frequently encountered in much of statistics, business, science and engineering, but they are not yet widely recognized as a technology in the sense of scalability. A reason for this relatively low degree of popularity is the lack of a well developed system of theory and algorithms to support the applications, as is the case for its convex counterpart. This paper aims to take one step in the direction of disciplined nonconvex and nonsmooth optimization. In particular, we consider in this paper some constrained nonconvex optimization models in block decision variables, with or without coupled affine constraints. In the case of without coupled constraints, we show a sublinear rate of convergence to an $\epsilon$-stationary solution in the form of variational inequality for a generalized conditional gradient method, where the convergence rate is shown to be dependent on the H\"olderian continuity of the gradient of the smooth part of the objective. For the model with coupled affine constraints, we introduce corresponding $\epsilon$-stationarity conditions, and apply two proximal-type variants of the ADMM to solve such a model, assuming the proximal ADMM updates can be implemented for all the block variables except for the last block, for which either a gradient step or a majorization-minimization step is implemented. We show an iteration complexity bound of $O(1/\epsilon^2)$ to reach an $\epsilon$-stationary solution for both algorithms. Moreover, we show that the same iteration complexity of a proximal BCD method follows immediately. Numerical results are provided to illustrate the efficacy of the proposed algorithms for tensor robust PCA.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge