Structured Deep Neural Network Pruning by Varying Regularization Parameters

Apr 25, 2018

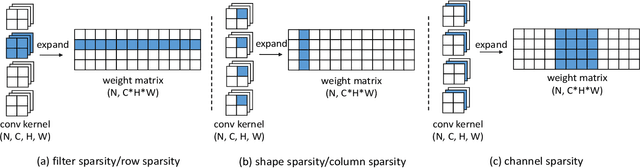

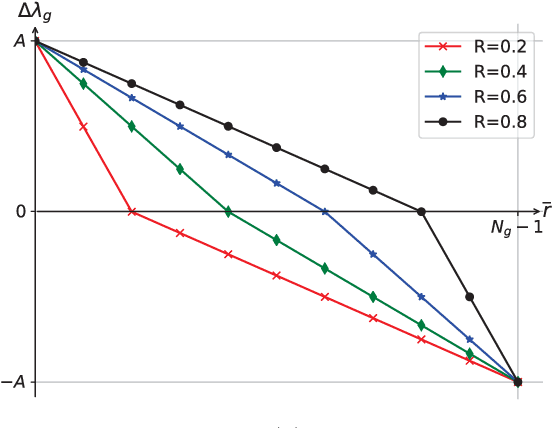

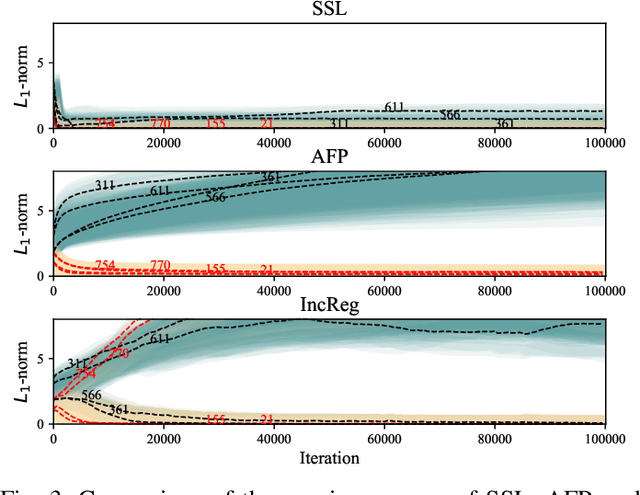

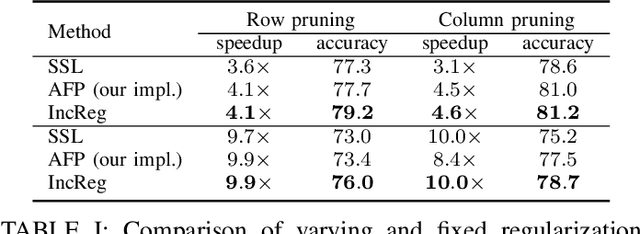

Convolutional neural networks (CNNs) are constrained by their heavy computation and storage requirements. Parameter pruning is a promising approach to CNN compression and acceleration: it eliminates redundant model parameters at a tolerable loss in performance. Existing regularization-based pruning methods, although effective, usually assign one fixed regularization parameter to all weights, which ignores the fact that different weights may differ in importance to the network. To address this, we propose a theoretically sound regularization-based pruning method that incrementally assigns different regularization parameters to different weights according to their importance to the network. On AlexNet and VGG-16, our method achieves a 4x theoretical speedup with accuracy similar to the baselines. On ResNet-50, it achieves 2x acceleration with only a 0.1% loss in top-5 accuracy.
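
The idea of incrementally assigning per-weight regularization parameters can be illustrated with a small NumPy sketch. This is not the paper's algorithm: the abstract does not state the importance criterion, the weight grouping, or the update rule, so the L1-norm ranking, the fixed increment `step`, the cap `max_penalty`, and the function names `update_group_penalties` and `sgd_step` are all assumptions made for illustration.

```python
import numpy as np

def update_group_penalties(weights, penalties, prune_ratio, step, max_penalty):
    """One incremental update of the per-group regularization factors.

    weights:   array of shape (num_groups, group_size)
    penalties: array of shape (num_groups,), current factor of each group
    """
    # Assumed importance criterion (not from the paper): L1 norm of each group.
    importance = np.abs(weights).sum(axis=1)
    num_prune = int(round(prune_ratio * len(importance)))
    order = np.argsort(importance)  # least important groups first
    # Raise the factor of the least important groups, lower it for the rest.
    delta = np.full(len(importance), -step)
    delta[order[:num_prune]] = step
    return np.clip(penalties + delta, 0.0, max_penalty)

def sgd_step(weights, grads, penalties, lr):
    """Gradient step with an L2 penalty whose strength differs per group."""
    # The gradient of 0.5 * lam * ||w||^2 with respect to w is lam * w.
    return weights - lr * (grads + penalties[:, None] * weights)

# Toy example: four groups of two weights each; task gradients are set to
# zero so that only the effect of the regularization is visible.
weights = np.array([[0.1, -0.1], [2.0, 1.0], [0.2, 0.1], [-1.5, 1.0]])
penalties = np.zeros(4)
for _ in range(10):
    penalties = update_group_penalties(
        weights, penalties, prune_ratio=0.5, step=0.25, max_penalty=1.0
    )
    weights = sgd_step(weights, np.zeros_like(weights), penalties, lr=0.5)

# Groups whose factor has reached the cap are treated as prunable.
pruned = penalties >= 1.0
```

In this toy run the two groups with the smallest L1 norm accumulate a growing penalty and shrink toward zero, while the two larger groups keep a zero penalty and are left unchanged. Updating whole groups rather than single weights mirrors the structured pruning named in the title, but the grouping used here is arbitrary.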
