Structural and object detection for phosphene images


Sep 26, 2018

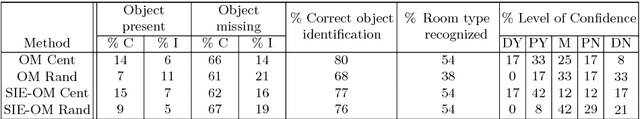

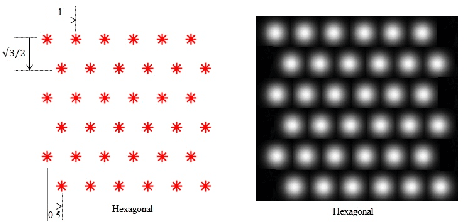

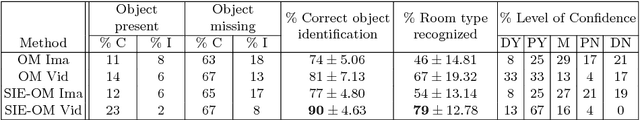

Prosthetic vision based on phosphenes is a promising way to provide visual perception to some blind people. However, phosphenic images are very limited in spatial resolution (e.g., a 32 x 32 phosphene array) and luminance levels (e.g., 8 gray levels), so the subject receives very limited information about the scene. High-level processing is therefore needed to extract more information from the scene and present it to the subject within the limitations of phosphenes. In this work, we study the recognition of indoor environments under simulated prosthetic vision. Most research in simulated prosthetic vision is based on static images, while very few researchers have addressed the problem of scene recognition through video sequences. We propose a new approach to build a schematic representation of indoor environments for phosphene images. Our schematic representation relies on two parallel CNNs: one extracts the structural informative edges of the room, and the other extracts the relevant object silhouettes based on mask segmentation. We performed a study with twelve normally sighted subjects to evaluate how well our methods support room recognition when phosphenic images and videos are presented. We show that our method increases the recognition ability of the user from 75% with alternative methods to 90% with our approach.
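To make the resolution and luminance constraints concrete, here is a minimal sketch of how a grayscale image could be reduced to a 32 x 32 grid with 8 gray levels. It assumes the input is a list of rows with intensities in [0, 1]; the function name `to_phosphene_grid`, the block averaging, and the uniform quantization are illustrative assumptions, not the simulator used in the paper.

```python
def to_phosphene_grid(image, grid=32, levels=8):
    """Reduce a grayscale image to a grid x grid array of quantized levels.

    image: list of rows, each a list of floats in [0, 1] (assumed format).
    Returns a list of `grid` rows, each with `grid` integers in 0..levels-1.
    """
    height, width = len(image), len(image[0])
    result = []
    for gy in range(grid):
        # Pixel rows covered by this phosphene (at least one row).
        y0 = gy * height // grid
        y1 = max(y0 + 1, (gy + 1) * height // grid)
        row = []
        for gx in range(grid):
            # Pixel columns covered by this phosphene (at least one column).
            x0 = gx * width // grid
            x1 = max(x0 + 1, (gx + 1) * width // grid)
            # Average the block so each phosphene reflects its local brightness.
            total = 0.0
            for y in range(y0, y1):
                for x in range(x0, x1):
                    total += image[y][x]
            mean = total / ((y1 - y0) * (x1 - x0))
            # Uniform quantization into `levels` bins, clamped at the top bin.
            row.append(min(levels - 1, int(mean * levels)))
        result.append(row)
    return result


# Usage: a 64 x 64 horizontal gradient from black (left) to white (right).
gradient = [[x / 63 for x in range(64)] for _ in range(64)]
phosphenes = to_phosphene_grid(gradient)
```

Each of the 1,024 output cells carries one of only 8 values, which illustrates why the abstract argues for selecting what to display (room edges and object silhouettes) rather than downsampling the raw scene.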
