Stochastic approximation with decision-dependent distributions: asymptotic normality and optimality


Jul 09, 2022

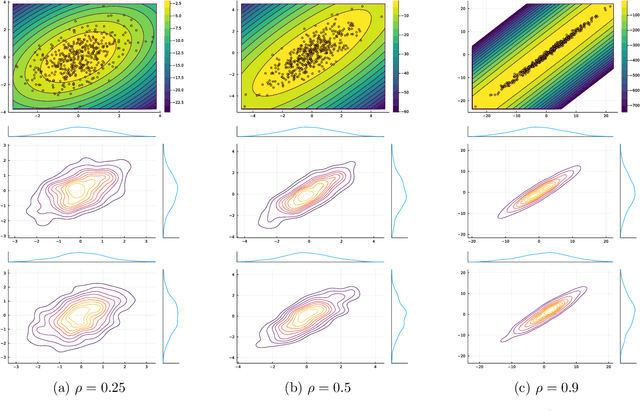

We analyze a stochastic approximation algorithm for decision-dependent problems, wherein the data distribution used by the algorithm evolves along the iterate sequence. The primary examples of such problems appear in performative prediction and its multiplayer extensions. We show that under mild assumptions, the deviation between the average iterate of the algorithm and the solution is asymptotically normal, with a covariance that cleanly decouples the effects of the gradient noise and the distributional shift. Moreover, building on the work of Hájek and Le Cam, we show that the asymptotic performance of the algorithm is locally minimax optimal.

* 35 pages, 1 figure
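As a rough illustration only, here is a minimal sketch of one common form of decision-dependent stochastic approximation: x_{t+1} = x_t − η_t G(x_t, z_t) with z_t drawn from D(x_t), the distribution induced by the current iterate, combined with iterate averaging. The location-family model D(x) = N(μ + εx, 1), the squared loss (so G(x, z) = x − z), the step-size schedule η_t = η₀ t^(−power), and the function and parameter names are all hypothetical choices for this example, not the paper's. For |ε| < 1 the solution x* satisfies x* = E_{z~D(x*)}[z] = μ + εx*, that is, x* = μ/(1 − ε).

```python
import random


def decision_dependent_sgd(mu=1.0, eps=0.5, x0=0.0, n_steps=100_000,
                           eta0=1.0, power=0.75, seed=0):
    """Averaged stochastic gradient iteration with decision-dependent samples.

    Hypothetical toy model (not from the paper):
      D(x) = N(mu + eps * x, 1), loss 0.5 * (x - z)**2, so G(x, z) = x - z.
    The equilibrium x* solves x* = mu + eps * x*, i.e. x* = mu / (1 - eps).
    Returns (last iterate, average of the iterates x_2, ..., x_{n+1}).
    """
    rng = random.Random(seed)
    x = x0
    running_sum = 0.0
    for t in range(1, n_steps + 1):
        # Draw the sample from the distribution induced by the *current* iterate.
        z = rng.gauss(mu + eps * x, 1.0)
        # Polynomially decaying step size; power in (1/2, 1) is the usual
        # regime in which averaging the iterates is effective.
        eta = eta0 * t ** (-power)
        # Stochastic gradient step with G(x, z) = x - z.
        x = x - eta * (x - z)
        running_sum += x
    return x, running_sum / n_steps


if __name__ == "__main__":
    x_last, x_bar = decision_dependent_sgd()
    print(f"last iterate: {x_last:.4f}, averaged iterate: {x_bar:.4f}, x* = 2.0")
```

With the defaults (μ = 1, ε = 0.5) the averaged iterate should land close to x* = 2. Because this toy mean field is linear, (1 − ε)(x − x*), the classical Polyak–Juditsky averaging argument suggests fluctuations of the averaged iterate of order 1/((1 − ε)√n); note that the factor 1/(1 − ε) comes from the distributional shift, while the unit noise variance comes from sampling.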
