Stacked U-Nets with Self-Assisted Priors Towards Robust Correction of Rigid Motion Artifact in Brain MRI

Paper and Code

Nov 11, 2021

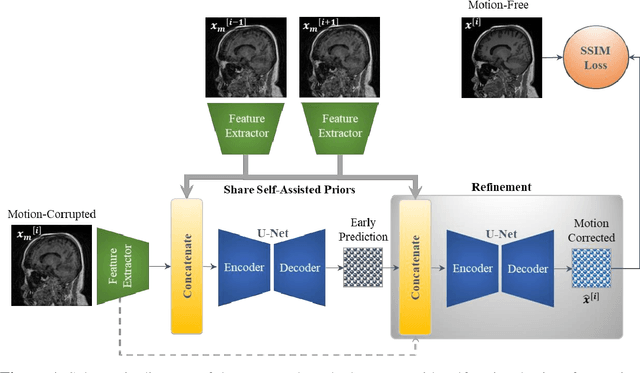

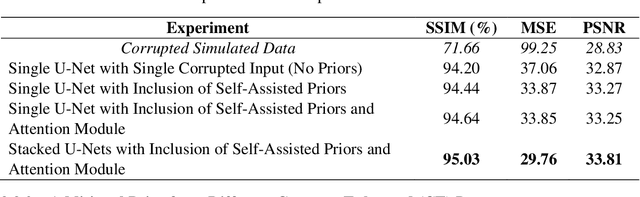

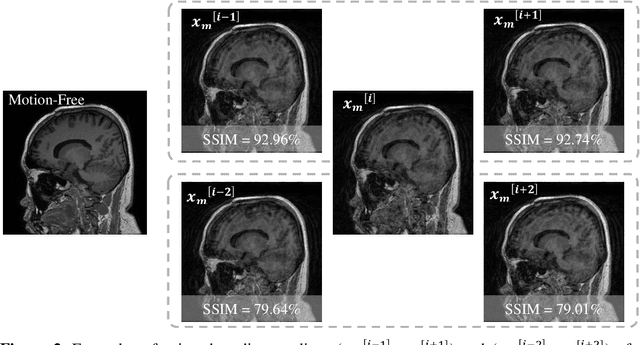

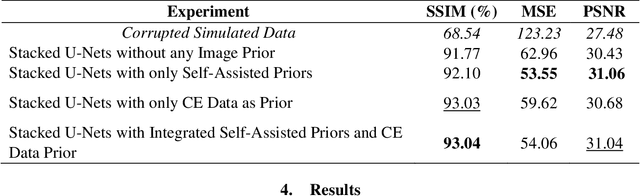

In this paper, we develop an efficient retrospective deep learning method, stacked U-Nets with self-assisted priors, to address the problem of rigid motion artifacts in MRI. The proposed method exploits additional knowledge priors from the corrupted images themselves, without the need for additional contrast data. The network learns missing structural details by sharing auxiliary information from contiguous slices of the same distorted subject. We further design a refinement stage of stacked U-Nets that helps preserve spatial image details and hence improves pixel-to-pixel dependency. Training the network requires simulated MRI motion artifacts. We present an extensive analysis using various types of image priors: the proposed self-assisted priors and priors from another image contrast of the same subject. The experimental analysis demonstrates the effectiveness and feasibility of our self-assisted priors, since they do not require any further data scans.
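As a rough illustration of the self-assisted prior idea, the sketch below builds a network input from a corrupted slice and its contiguous neighbours in the same volume. The abstract does not specify how the neighbouring slices are fed to the network, so the channel-wise stacking, the `radius` parameter, the boundary clamping, and the function name `stack_with_neighbors` are all assumptions made for this example, not the authors' implementation.

```python
import numpy as np

def stack_with_neighbors(volume, index, radius=1):
    """Return slice `index` together with its contiguous neighbours.

    volume: array of shape (depth, height, width) holding one subject's
            motion-corrupted slices.
    Output: array of shape (2 * radius + 1, height, width), usable as a
            multi-channel input (assumed layout, not taken from the paper).
    """
    depth = volume.shape[0]
    # Neighbour indices; clamped at the volume boundary (an assumption),
    # so the first and last slices repeat themselves as their own prior.
    ids = np.clip(np.arange(index - radius, index + radius + 1), 0, depth - 1)
    return volume[ids]

# Toy volume: 4 slices of 2 x 2 pixels.
volume = np.arange(4 * 2 * 2, dtype=np.float32).reshape(4, 2, 2)
x = stack_with_neighbors(volume, index=0)
print(x.shape)
```

Because the priors come from the same corrupted acquisition, no extra scan or second contrast is needed to assemble this input, which is the property the abstract highlights.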
