Stacked BNAS: Rethinking Broad Convolutional Neural Network for Neural Architecture Search

Nov 15, 2021

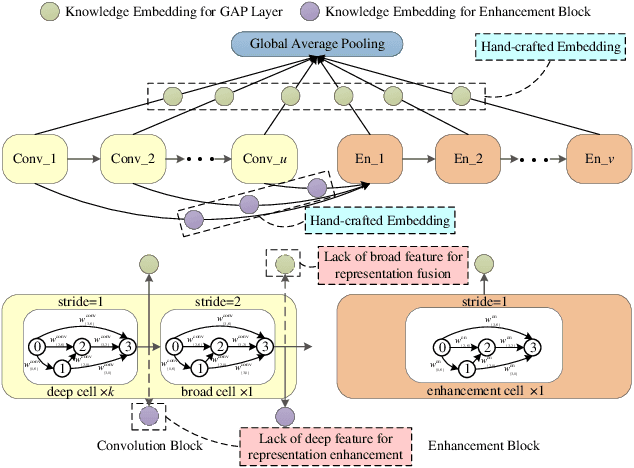

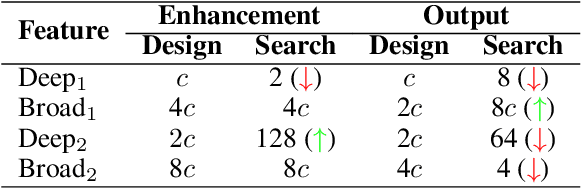

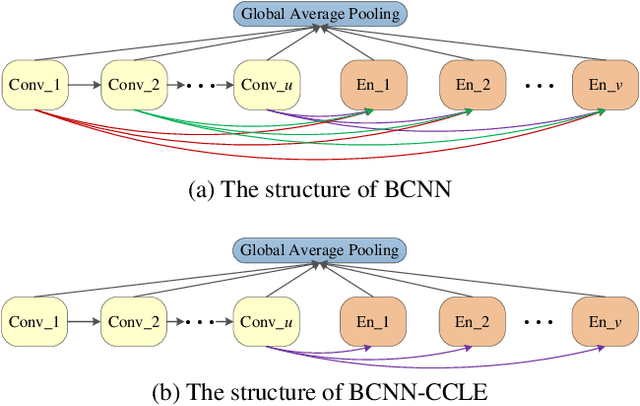

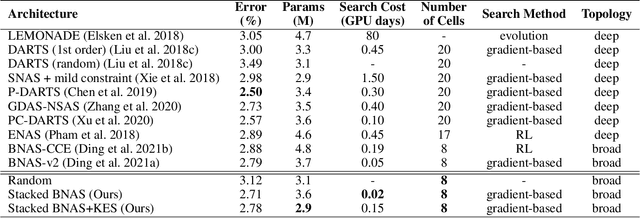

Unlike other NAS approaches built on deep scalable architectures, Broad Neural Architecture Search (BNAS) uses a broad architecture as its search space. This architecture, dubbed Broad Convolutional Neural Network (BCNN), consists of convolution and enhancement blocks and substantially improves search efficiency. BCNN reuses the cell topologies within each convolution block, so BNAS can search efficiently with only a few cells. In addition, multi-scale feature fusion and knowledge embedding are used to improve the performance of BCNN despite its shallow topology. However, BNAS has two drawbacks: 1) insufficient representation diversity for feature fusion and enhancement, and 2) the time-consuming manual design of knowledge embeddings by human experts. In this paper, we propose Stacked BNAS, whose search space is an improved broad scalable architecture named Stacked BCNN and which performs better than BNAS. On the one hand, Stacked BCNN treats a mini-BCNN as its basic block to preserve comprehensive representations and deliver powerful feature extraction ability. On the other hand, we propose Knowledge Embedding Search (KES) to learn appropriate knowledge embeddings automatically. Experimental results show that 1) Stacked BNAS obtains better performance than BNAS, 2) KES helps reduce the parameters of the learned architecture while maintaining satisfactory performance, and 3) Stacked BNAS delivers state-of-the-art efficiency of 0.02 GPU days.
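The abstract does not spell out how multi-scale feature fusion works. As a rough illustration only, the sketch below assumes that each block's output feature map is reduced by global average pooling and the resulting vectors are concatenated into one fused representation. The function names, the nested-list feature-map layout, and the block shapes are all hypothetical and are not taken from the paper or its code.

```python
from statistics import fmean


def global_average_pool(feature_map):
    """Reduce a feature map to one mean value per channel.

    feature_map: list of channels, each channel a 2D list (H x W) of floats.
    This layout is an assumption made for the sketch.
    """
    return [fmean(v for row in channel for v in row) for channel in feature_map]


def fuse_multi_scale(block_outputs):
    """Concatenate the pooled vectors of every block output (assumed fusion rule)."""
    fused = []
    for feature_map in block_outputs:
        fused.extend(global_average_pool(feature_map))
    return fused


def constant_map(channels, size, value):
    """Build a toy feature map with `channels` channels of size x size."""
    return [[[float(value)] * size for _ in range(size)] for _ in range(channels)]


# Hypothetical outputs of three blocks at decreasing spatial resolution.
outputs = [
    constant_map(2, 8, 1.0),  # e.g. an early block: 2 channels, 8x8
    constant_map(4, 4, 2.0),  # e.g. a later block: 4 channels, 4x4
    constant_map(8, 2, 3.0),  # e.g. an enhancement block: 8 channels, 2x2
]
fused = fuse_multi_scale(outputs)
print(len(fused))  # one entry per channel across all blocks
```

Because pooling removes the spatial dimensions, blocks with different resolutions can be combined without resizing, which is the property that makes this kind of fusion convenient for a shallow, broad topology.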