Stability-Guaranteed Reinforcement Learning for Contact-rich Manipulation

Paper and Code

Apr 22, 2020

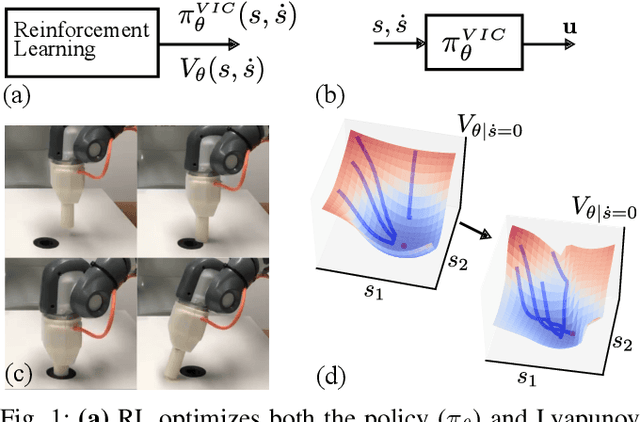

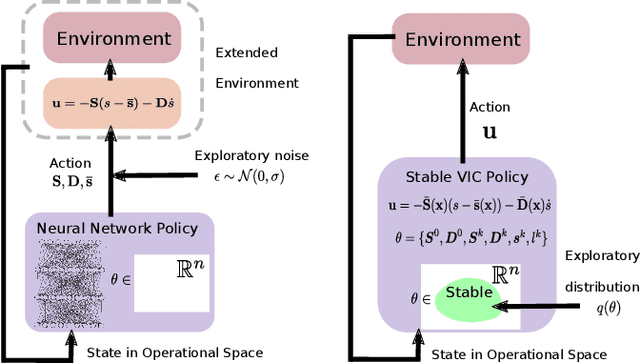

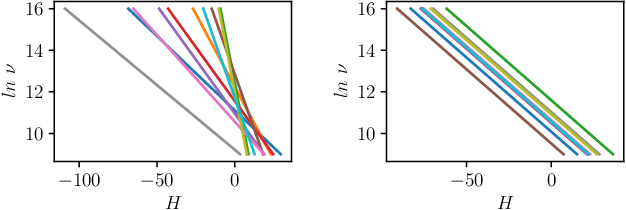

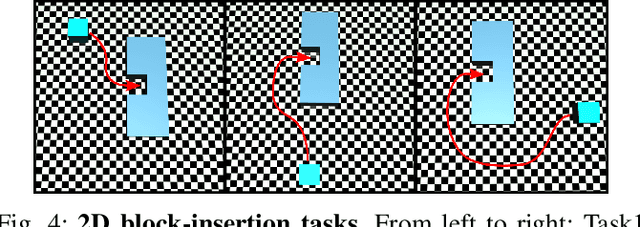

Reinforcement learning (RL) has had its fair share of success in contact-rich manipulation tasks, but it still lags behind in benefiting from advances in robot control theory such as impedance control and stability guarantees. Recently, the concept of variable impedance control (VIC) was adopted into RL with encouraging results. However, the more important issue of stability remains unaddressed. To clarify the challenge in stable RL, we introduce the term all-the-time-stability, which unambiguously means that every possible rollout is stability certified. Our contribution is a model-free RL method that not only adopts VIC but also achieves all-the-time-stability. Building on a recently proposed stable VIC controller as the policy parameterization, we introduce a novel policy search algorithm that is inspired by the Cross-Entropy Method and inherently guarantees stability. As part of our extensive experimental studies, we report, to the best of our knowledge, the first successful application of RL with all-the-time-stability on the benchmark problem of peg-in-hole.
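For readers unfamiliar with the Cross-Entropy Method mentioned above, the following is a minimal sketch of the generic, textbook version with a diagonal Gaussian sampling distribution. It is not the stability-guaranteeing algorithm of the paper, whose details the abstract does not give. The function names, the toy reward, and all hyperparameters are illustrative assumptions.

```python
import numpy as np


def cross_entropy_method(objective, mean, std, n_samples=50, n_elite=10,
                         n_iters=30, seed=0):
    """Generic CEM: maximize `objective` over a real-valued parameter vector.

    Illustrative only; this is NOT the paper's stability-guaranteed variant.
    """
    rng = np.random.default_rng(seed)
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    for _ in range(n_iters):
        # Sample candidate parameter vectors from the current Gaussian.
        samples = rng.normal(mean, std, size=(n_samples, mean.size))
        # Score each candidate (in RL this would be a rollout return).
        rewards = np.array([objective(s) for s in samples])
        # Keep the highest-scoring candidates (the "elite" set).
        elite = samples[np.argsort(rewards)[-n_elite:]]
        # Refit the sampling distribution to the elite set.
        mean = elite.mean(axis=0)
        std = elite.std(axis=0) + 1e-6  # small floor avoids a zero-width Gaussian
    return mean


def toy_reward(theta):
    # Hypothetical stand-in for a rollout return: peak at theta = (1, -2).
    target = np.array([1.0, -2.0])
    return -np.sum((theta - target) ** 2)


best = cross_entropy_method(toy_reward, mean=[0.0, 0.0], std=[2.0, 2.0])
print(best)
```

In a policy search setting, `objective` would run the policy with the sampled parameters and return the episode reward. A plain Gaussian over unconstrained parameters gives no stability guarantee by itself, which is the gap the abstract says the proposed method closes.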
