SRIB Submission to Interspeech 2021 DiCOVA Challenge

Paper and Code

Jun 15, 2021

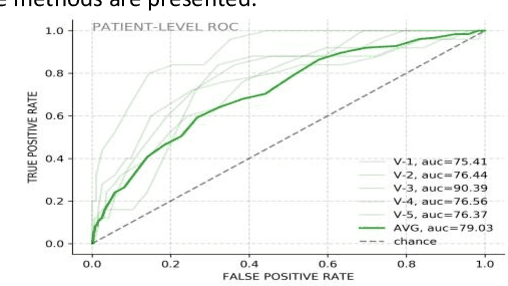

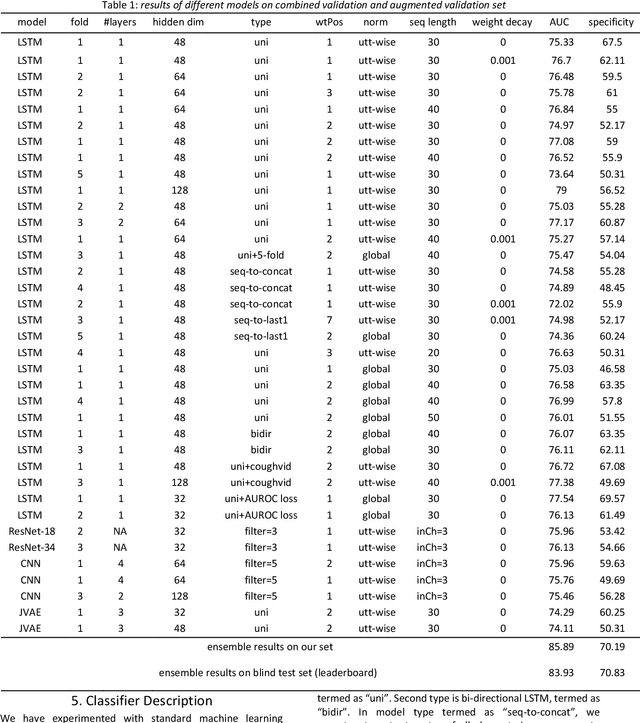

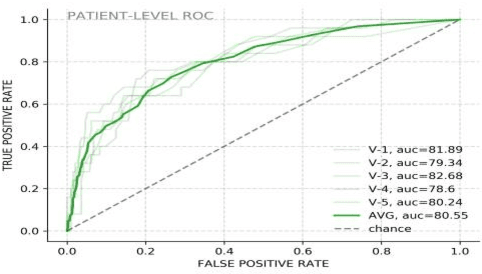

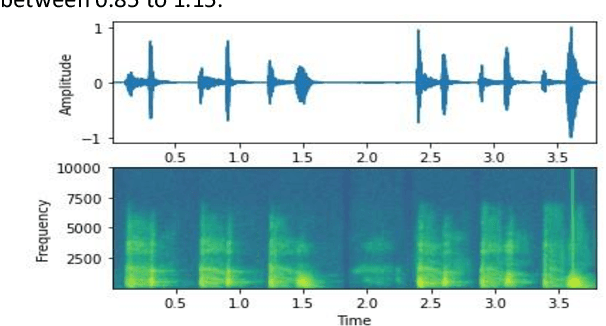

The COVID-19 pandemic has resulted in more than 125 million infections and more than 2.7 million deaths. In this paper, we attempt to classify COVID vs. non-COVID cough sounds using signal processing and deep learning methods. Air turbulence, vibration of tissues, movement of fluid through the airways, and the opening and closing of the glottis are some of the mechanisms that produce acoustic signals during a cough. Does COVID-19 alter the acoustic characteristics of breath, cough, and speech sounds produced by the respiratory system? This remains an open question. We incorporate novel data augmentation methods for cough sounds, together with multiple deep neural network architectures and handcrafted features. Our proposed system gives a 14% absolute improvement in area under the curve (AUC). The system was developed as part of the Interspeech 2021 special session and challenge on Diagnosing COVID-19 using Acoustics (DiCOVA). Our proposed method secured 5th position on the leaderboard among 29 participants.
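The abstract states its result as an absolute improvement in AUC. As a minimal sketch of what that metric measures, the snippet below computes AUC as the fraction of (positive, negative) pairs that a classifier ranks correctly, then takes the difference between two systems. The function name `roc_auc`, the labels, and both score lists are hypothetical toy values chosen for illustration. They are not challenge data, and this is not the authors' evaluation code.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive example is scored
    higher than a random negative example (ties count as half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = COVID cough, 0 = non-COVID cough.
labels = [1, 1, 1, 0, 0, 0, 0]
reference_scores = [0.6, 0.4, 0.3, 0.5, 0.35, 0.2, 0.1]  # assumed reference system
system_scores = [0.9, 0.7, 0.4, 0.5, 0.3, 0.2, 0.1]      # assumed improved system

reference_auc = roc_auc(labels, reference_scores)
system_auc = roc_auc(labels, system_scores)

# "Absolute improvement" is the plain difference of the two AUC values,
# not a ratio relative to the reference.
absolute_gain = system_auc - reference_auc
print(f"reference AUC={reference_auc:.3f}, system AUC={system_auc:.3f}, "
      f"absolute gain={absolute_gain:.3f}")
```

The pairwise form is quadratic in the number of examples, which is fine for a small illustration. For larger sets, a rank-based implementation such as the one in a standard metrics library would be the usual choice.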
