Spatial Language Understanding for Object Search in Partially Observed Cityscale Environments

Dec 04, 2020

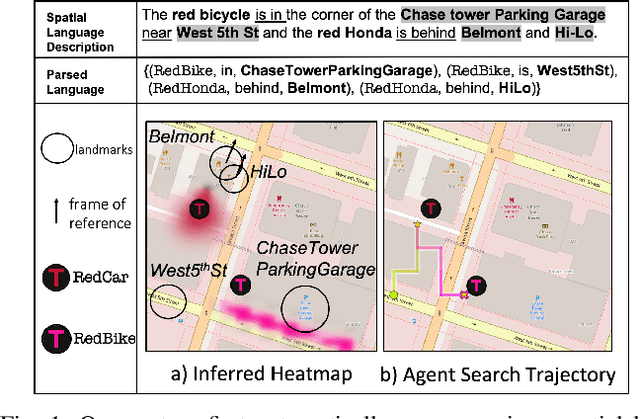

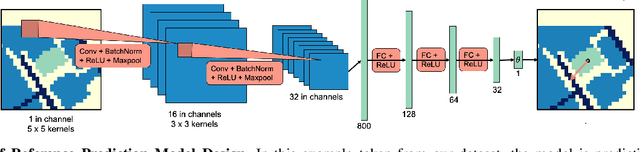

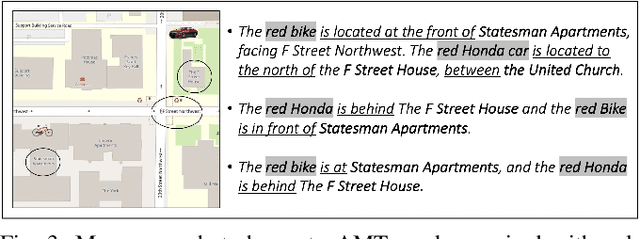

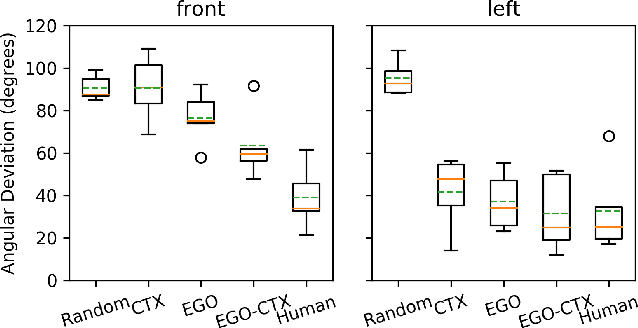

We present a system that enables robots to interpret spatial language as a distribution over object locations for effective search in partially observable cityscale environments. We introduce the spatial language observation space and formulate a stochastic observation model under the framework of a Partially Observable Markov Decision Process (POMDP), which incorporates information extracted from the spatial language into the robot's belief. To interpret ambiguous, context-dependent prepositions (e.g., "front"), we propose a convolutional neural network model that learns to predict the language provider's relative frame of reference (FoR) given the environment context. We demonstrate the generalizability of our FoR prediction model and object search system through cross-validation over areas of five cities, each with a 40,000 m$^2$ footprint. End-to-end experiments in simulation show that our system achieves faster search and a higher success rate than a keyword-based baseline without spatial preposition understanding.
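To make the idea of folding a spatial-language observation into a belief more concrete, here is a minimal sketch of a Bayesian belief update over a small grid for an utterance such as "the object is in front of the landmark". Everything in it is an illustrative assumption rather than the paper's model: the function names (`uniform_belief`, `front_likelihood`, `update_belief`), the hand-written likelihood (a directional term multiplied by a Gaussian proximity term), and the fixed `for_angle`, which stands in for the frame of reference that the paper predicts with a convolutional neural network.

```python
import math

def uniform_belief(width, height):
    """Uniform prior over target locations on a width x height grid."""
    p = 1.0 / (width * height)
    return {(x, y): p for x in range(width) for y in range(height)}

def front_likelihood(cell, landmark, for_angle, sigma=2.0, eps=1e-3):
    """Hypothetical likelihood of 'in front of <landmark>' for a target at `cell`.

    `for_angle` (radians) is the assumed front direction of the frame of
    reference; here it is supplied by hand instead of being predicted.
    """
    dx, dy = cell[0] - landmark[0], cell[1] - landmark[1]
    dist = math.hypot(dx, dy)
    if dist == 0:
        return eps  # the landmark's own cell gets only the floor probability
    # Cosine of the angle between the front direction and landmark -> cell.
    cos_sim = (dx * math.cos(for_angle) + dy * math.sin(for_angle)) / dist
    directional = max(cos_sim, 0.0)  # zero weight behind the landmark
    proximity = math.exp(-dist ** 2 / (2 * sigma ** 2))
    return eps + directional * proximity

def update_belief(belief, likelihood_fn):
    """Bayes rule: posterior is proportional to prior times likelihood."""
    unnormalized = {c: p * likelihood_fn(c) for c, p in belief.items()}
    total = sum(unnormalized.values())
    return {c: v / total for c, v in unnormalized.items()}

belief = uniform_belief(10, 10)
landmark = (5, 5)
for_angle = 0.0  # assumption: "front" points along the +x axis

posterior = update_belief(
    belief, lambda c: front_likelihood(c, landmark, for_angle)
)
best = max(posterior, key=posterior.get)
print(best, round(posterior[best], 4))
```

In this toy setup the posterior mass concentrates on cells just to the +x side of the landmark, while cells behind it keep only the small floor probability `eps`, so an incorrect frame of reference reduces the belief there without ruling those cells out.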
