Sparse Logistic Regression Learns All Discrete Pairwise Graphical Models


Oct 28, 2018

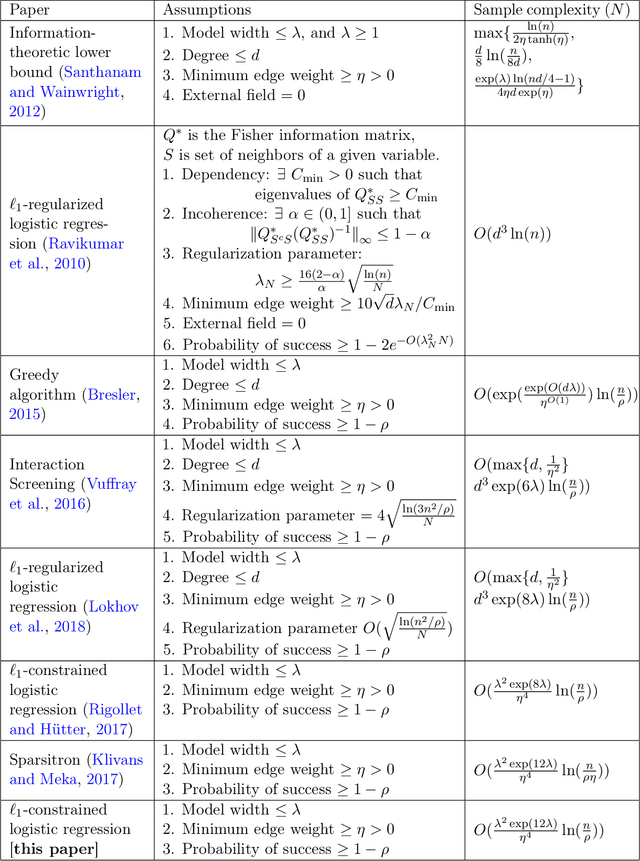

We characterize the effectiveness of a natural and classic algorithm for recovering the Markov graph of a general discrete pairwise graphical model from i.i.d. samples. The algorithm is (appropriately regularized) conditional maximum likelihood, which involves solving a convex program for each node; for Ising models this is $\ell_1$-constrained logistic regression, while for more general alphabets an $\ell_{2,1}$ group-norm constraint needs to be used. We show that this algorithm can recover an arbitrary discrete pairwise graphical model, and we characterize its sample complexity as a function of model width, alphabet size, edge parameter accuracy, and the number of variables. Along every one of these axes, it matches or improves on all existing results and algorithms for this problem. Our analysis applies a sharp generalization error bound for logistic regression when the weight vector has an $\ell_1$ constraint (or $\ell_{2,1}$ constraint) and the sample vector has an $\ell_{\infty}$ constraint (or $\ell_{2, \infty}$ constraint). We also show that the proposed convex programs can be efficiently optimized in $\tilde{O}(n^2)$ running time (where $n$ is the number of variables) under the same statistical guarantee. Our experimental results verify our analysis.
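To make the Ising case concrete, the following is a minimal sketch of node-wise $\ell_1$-constrained logistic regression, not the authors' implementation. It rests on several assumptions that the abstract does not state: a zero-field Ising model $P(x) \propto \exp(\sum_{i<j} A_{ij} x_i x_j)$ over $x \in \{-1, +1\}^n$, so that $P(x_i = 1 \mid x_{-i}) = \sigma(2 \sum_j A_{ij} x_j)$; an $\ell_1$ radius of twice the width; projected gradient descent as the solver (chosen for simplicity, not for the $\tilde{O}(n^2)$ running time); and a threshold of half the minimum edge weight. The names `sample_ising`, `project_l1_ball`, `fit_node`, and `recover_edges` are hypothetical.

```python
import itertools
import math
import random


def sample_ising(weights, n_vars, n_samples, rng):
    """Exact i.i.d. samples from P(x) ∝ exp(sum A_ij x_i x_j) by enumerating all states."""
    states = list(itertools.product([-1, 1], repeat=n_vars))
    probs = [math.exp(sum(a * s[i] * s[j] for (i, j), a in weights.items()))
             for s in states]
    return rng.choices(states, weights=probs, k=n_samples)


def project_l1_ball(w, radius):
    """Euclidean projection of w onto {v : ||v||_1 <= radius} (sort-based method)."""
    if sum(abs(v) for v in w) <= radius:
        return list(w)
    magnitudes = sorted((abs(v) for v in w), reverse=True)
    cumsum, theta = 0.0, 0.0
    for k, u in enumerate(magnitudes, start=1):
        cumsum += u
        candidate = (cumsum - radius) / k
        if u > candidate:  # holds for a prefix of k; keep the last valid value
            theta = candidate
    return [math.copysign(max(abs(v) - theta, 0.0), v) for v in w]


def fit_node(samples, node, radius, steps=150, lr=1.0):
    """l1-constrained logistic regression of x[node] on the remaining variables."""
    others = [j for j in range(len(samples[0])) if j != node]
    w = [0.0] * len(others)
    for _ in range(steps):
        grad = [0.0] * len(others)
        for s in samples:
            y = s[node]
            margin = y * sum(wk * s[j] for wk, j in zip(w, others))
            coef = -y / (1.0 + math.exp(margin))  # derivative of log(1 + exp(-margin))
            for k, j in enumerate(others):
                grad[k] += coef * s[j]
        step = [wk - lr * g / len(samples) for wk, g in zip(w, grad)]
        w = project_l1_ball(step, radius)
    return dict(zip(others, w))


def recover_edges(samples, width, min_edge):
    """Estimate A_ij as w_j / 2 for each node i and keep entries above min_edge / 2."""
    edges = set()
    for i in range(len(samples[0])):
        for j, wj in fit_node(samples, i, radius=2.0 * width).items():
            if abs(wj) / 2.0 >= min_edge / 2.0:
                edges.add((min(i, j), max(i, j)))  # union over both endpoints (assumption)
    return edges


# Toy 5-node chain with mixed-sign couplings (illustrative values only).
true_weights = {(0, 1): 0.6, (1, 2): -0.6, (2, 3): 0.6, (3, 4): -0.6}
rng = random.Random(0)
samples = sample_ising(true_weights, n_vars=5, n_samples=1000, rng=rng)
edges = recover_edges(samples, width=1.2, min_edge=0.6)
print(sorted(edges))
```

Here the width 1.2 is the largest absolute row sum of the toy coupling matrix (interior nodes have two edges of magnitude 0.6). For non-binary alphabets the same structure would apply with an $\ell_{2,1}$ group-norm projection in place of `project_l1_ball`, which this sketch does not attempt.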
