Small Drone Field Experiment: Data Collection & Processing


Nov 29, 2017

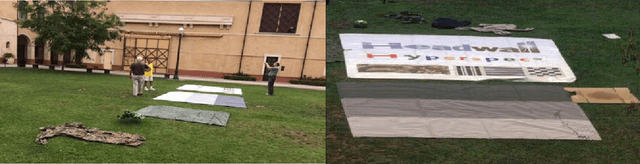

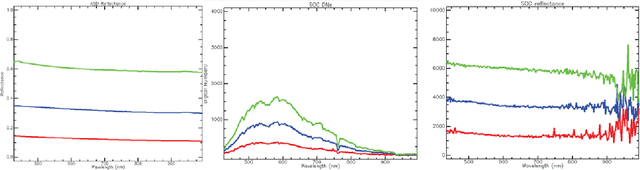

Following an initiative formalized in April 2016, known as ARL West, between the U.S. Army Research Laboratory (ARL) and the University of Southern California's Institute for Creative Technologies (USC ICT), a field experiment was coordinated and executed in the summer of 2016 by ARL, USC ICT, and Headwall Photonics. The purpose was to image part of the USC main campus in Los Angeles, USA, using two portable commercial off-the-shelf (COTS) aerial drone solutions for data acquisition, to support photogrammetry (3D reconstruction from images) and the fusion of hyperspectral data with the recovered set of 3D point clouds representing the target area. The research aims to determine the viability of having a machine segment the target area into key material classes (e.g., manmade structures, live vegetation, water) for multiple purposes, including providing the user with a more accurate understanding of the scene and enabling the unsupervised automatic sampling of meaningful material classes from the target area for adaptive semi-supervised machine learning. In the latter case, a target set library may be used, for example, to automatically train machines with data of local material classes, increasing the chances that they recognize targets. The paper discusses the field experiment and the associated data post-processing approach used to correct for reflectance, geo-rectify the data, recover the area's dense point clouds from images, register the spectral and elevation properties of scene surfaces from the independently collected datasets, and generate the desired segmented scene maps. Lessons learned from the experience are highlighted throughout the paper.
