SLOGAN: Handwriting Style Synthesis for Arbitrary-Length and Out-of-Vocabulary Text

Feb 23, 2022

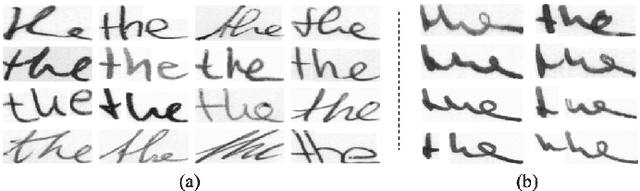

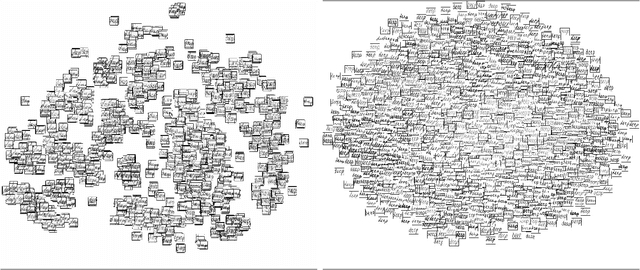

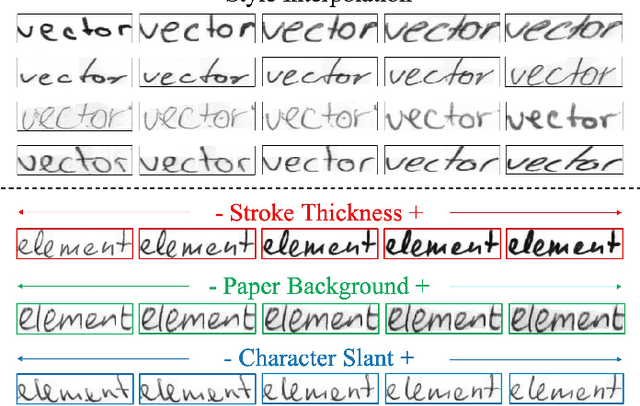

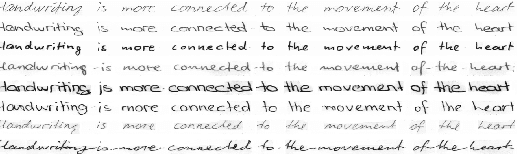

Training robust text recognizers requires large amounts of labeled data. However, collecting handwriting data that covers diverse styles and an immense lexicon is expensive. Although data synthesis is a promising way to relieve data hunger, two key issues of handwriting synthesis, namely style representation and content embedding, remain unsolved. To this end, we propose a novel method that can synthesize parameterized and controllable handwriting Styles for arbitrary-Length and Out-of-vocabulary text based on a Generative Adversarial Network (GAN), termed SLOGAN. Specifically, we propose a style bank that parameterizes specific handwriting styles as latent vectors, which are fed to a generator as style priors to produce the corresponding handwritten styles. Training the style bank requires only the writer identity labels of the source images, rather than attribute annotations. Moreover, we embed the text content by providing an easily obtainable printed-style image, so that content diversity can be achieved flexibly by changing the input printed image. Finally, the generator is guided by dual discriminators to handle handwriting characteristics both at the level of separated characters and across series of cursive joins. Our method can synthesize words that are not included in the training vocabulary, and in various new styles. Extensive experiments show that high-quality text images with great style diversity and a rich vocabulary can be synthesized using our method, thereby enhancing the robustness of the recognizer.
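The style bank idea can be illustrated with a minimal sketch, which is not the authors' implementation. It assumes the bank is a lookup table holding one latent vector per writer ID, and that a "new" style can be obtained by linearly blending two stored vectors; the class name `StyleBank`, its methods, and the blending rule are all hypothetical, and the vectors are randomly initialized rather than trained.

```python
import random


class StyleBank:
    """Hypothetical lookup table: one latent style vector per writer ID."""

    def __init__(self, num_writers, dim, seed=0):
        rng = random.Random(seed)
        # Assumption: in a real model these would be trained parameters;
        # here they are only randomly initialized for illustration.
        self.vectors = [
            [rng.gauss(0.0, 1.0) for _ in range(dim)]
            for _ in range(num_writers)
        ]

    def lookup(self, writer_id):
        """Return the latent style vector stored for one writer."""
        return self.vectors[writer_id]

    def interpolate(self, writer_a, writer_b, alpha):
        """Blend two stored styles (assumed way of forming a new style)."""
        a = self.lookup(writer_a)
        b = self.lookup(writer_b)
        return [(1.0 - alpha) * x + alpha * y for x, y in zip(a, b)]


bank = StyleBank(num_writers=4, dim=8)
style = bank.lookup(2)               # style prior for writer 2
blend = bank.interpolate(0, 1, 0.5)  # halfway between writers 0 and 1
```

In this sketch, `style` or `blend` would play the role of the style prior passed to a generator alongside the printed-style content image; the generator and the dual discriminators are outside its scope.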