Shepherding Hordes of Markov Chains

Paper and Code

Mar 26, 2019

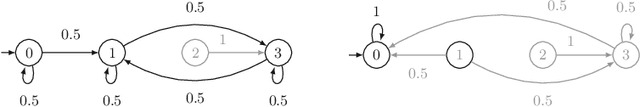

This paper considers large families of Markov chains (MCs) that are defined over a set of parameters with finite discrete domains. Such families occur in software product lines, planning under partial observability, and sketching of probabilistic programs. Simple questions, like `does at least one family member satisfy a property?', are NP-hard. We tackle two problems: distinguish family members that satisfy a given quantitative property from those that do not, and determine a family member that satisfies the property optimally, i.e., with the highest probability or reward. We show that combining two well-known techniques, MDP model checking and abstraction refinement, mitigates the computational complexity. Experiments on a broad set of benchmarks show that in many situations, our approach is able to handle families of millions of MCs, providing superior scalability compared to existing solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge