Search What You Want: Barrier Panelty NAS for Mixed Precision Quantization

Paper and Code

Jul 20, 2020

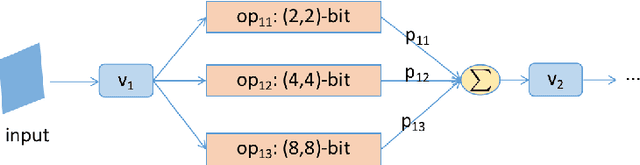

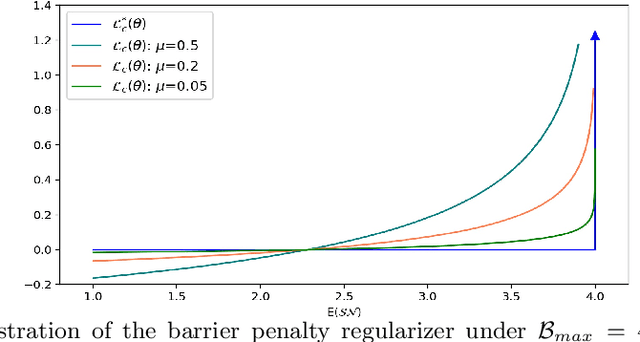

Emergent hardwares can support mixed precision CNN models inference that assign different bitwidths for different layers. Learning to find an optimal mixed precision model that can preserve accuracy and satisfy the specific constraints on model size and computation is extremely challenge due to the difficult in training a mixed precision model and the huge space of all possible bit quantizations. In this paper, we propose a novel soft Barrier Penalty based NAS (BP-NAS) for mixed precision quantization, which ensures all the searched models are inside the valid domain defined by the complexity constraint, thus could return an optimal model under the given constraint by conducting search only one time. The proposed soft Barrier Penalty is differentiable and can impose very large losses to those models outside the valid domain while almost no punishment for models inside the valid domain, thus constraining the search only in the feasible domain. In addition, a differentiable Prob-1 regularizer is proposed to ensure learning with NAS is reasonable. A distribution reshaping training strategy is also used to make training more stable. BP-NAS sets new state of the arts on both classification (Cifar-10, ImageNet) and detection (COCO), surpassing all the efficient mixed precision methods designed manually and automatically. Particularly, BP-NAS achieves higher mAP (up to 2.7\% mAP improvement) together with lower bit computation cost compared with the existing best mixed precision model on COCO detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge