Search Efficient Binary Network Embedding

Paper and Code

Jan 14, 2019

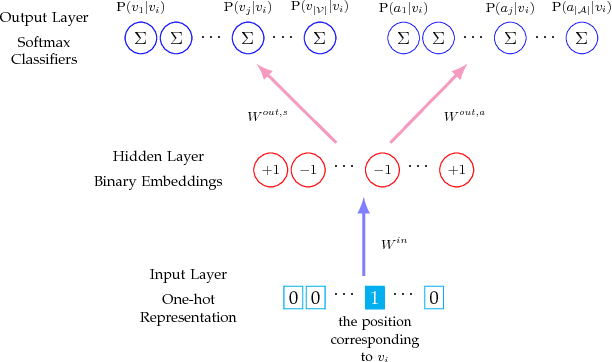

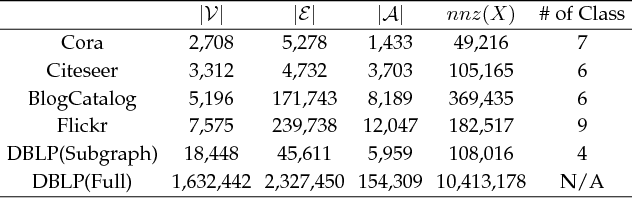

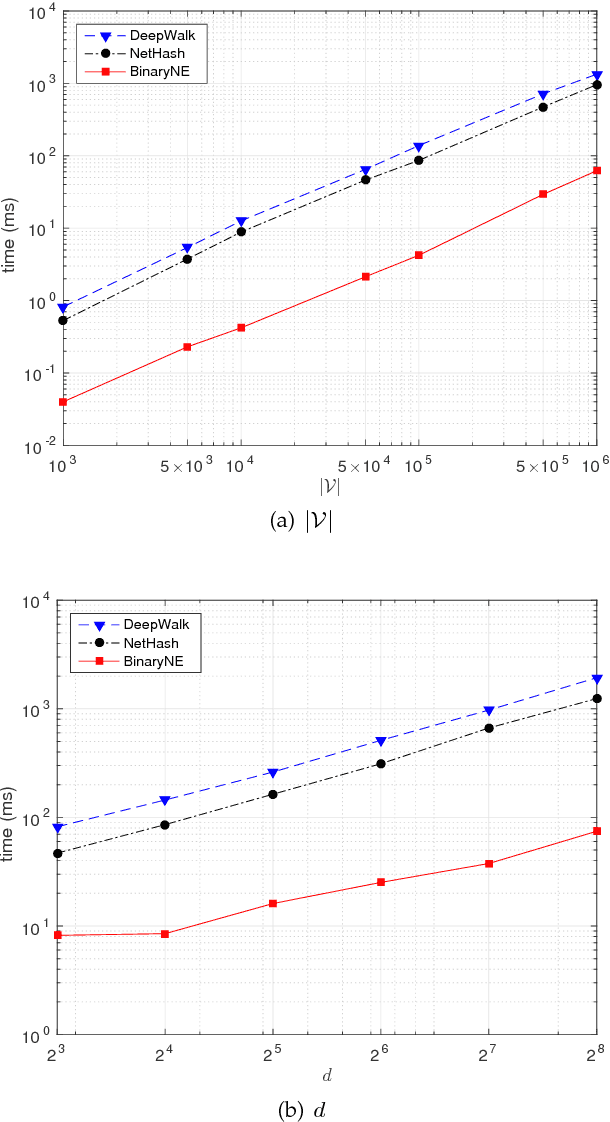

Traditional network embedding primarily focuses on learning a dense vector representation for each node, which encodes network structure and/or node content information, such that off-the-shelf machine learning algorithms can be easily applied to the vector-format node representations for network analysis. However, the learned dense vector representations are inefficient for large-scale similarity search, which requires to find the nearest neighbor measured by Euclidean distance in a continuous vector space. In this paper, we propose a search efficient binary network embedding algorithm called BinaryNE to learn a sparse binary code for each node, by simultaneously modeling node context relations and node attribute relations through a three-layer neural network. BinaryNE learns binary node representations efficiently through a stochastic gradient descent based online learning algorithm. The learned binary encoding not only reduces memory usage to represent each node, but also allows fast bit-wise comparisons to support much quicker network node search compared to Euclidean distance or other distance measures. Our experiments and comparisons show that BinaryNE not only delivers more than 23 times faster search speed, but also provides comparable or better search quality than traditional continuous vector based network embedding methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge