Scaling Up Graph Propagation Computation on Large Graphs: A Local Chebyshev Approximation Approach


Dec 14, 2024

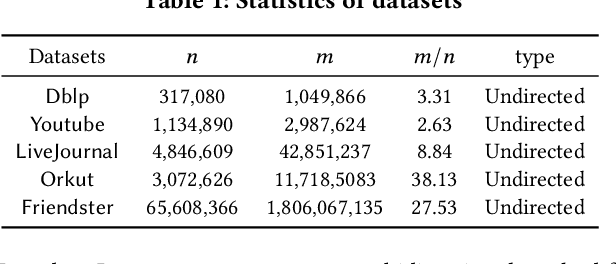

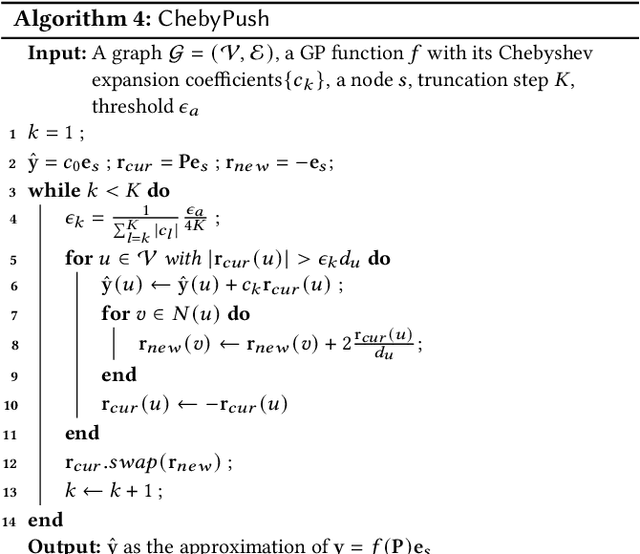

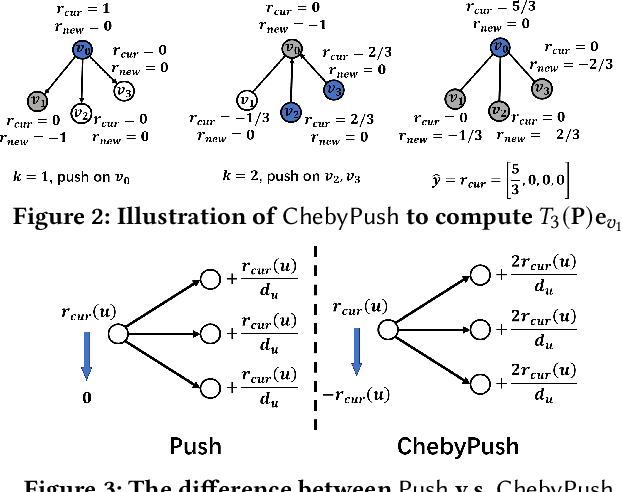

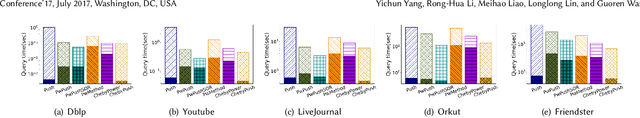

Graph propagation (GP) computation plays a crucial role in graph data analysis. It supports applications such as node similarity queries, node ranking, graph clustering, and graph neural networks. Existing methods mainly rely on power iteration or push-based computation, and they often converge slowly on large-scale graphs. To address this issue, we propose an approach that accelerates both power iteration and push methods using Chebyshev polynomials. We first derive a Chebyshev expansion formula for general GP functions. This formula offers a new perspective on GP computation and yields faster convergence. Building on this result, we develop a Chebyshev power iteration method (\ltwocheb) and a Chebyshev push method (\chebpush). For personalized PageRank and heat kernel PageRank, two well-studied GP problems, \ltwocheb achieves an approximately $O(\sqrt{N})$ speedup over existing power iteration techniques. For \chebpush, we propose a subset Chebyshev recurrence technique. This technique enables a push-style local algorithm with a provable error guarantee and lower time complexity than existing push methods. Extensive experiments on five large real-world datasets show that the proposed algorithms are more efficient than state-of-the-art approaches.
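The abstract does not give the expansion formula or the details of the power iteration and push algorithms. The following is therefore only a minimal sketch of the general idea it builds on. It approximates a GP vector f(Â)s with a truncated Chebyshev series and compares the result with plain power iteration for personalized PageRank.

The sketch rests on several assumptions. The graph is undirected, given as adjacency lists, and has no isolated nodes. It uses the symmetric normalization Â = D^{-1/2} A D^{-1/2}, whose eigenvalues lie in [-1, 1]. The PPR weight function is taken to be f(λ) = α / (1 - (1 - α)λ). All function names are hypothetical. This is a textbook Chebyshev approximation, not the authors' method, and it has none of the subset recurrence or push-style locality described for \chebpush.

```python
import math
from typing import Callable, List

Vector = List[float]
Graph = List[List[int]]  # adjacency lists of an undirected graph (assumed: no isolated nodes)


def sym_matvec(adj: Graph, x: Vector) -> Vector:
    """Return A_hat @ x with A_hat = D^{-1/2} A D^{-1/2}; its eigenvalues lie in [-1, 1]."""
    deg = [len(nbrs) for nbrs in adj]
    y = [0.0] * len(adj)
    for u, nbrs in enumerate(adj):
        for v in nbrs:
            y[u] += x[v] / math.sqrt(deg[u] * deg[v])
    return y


def cheb_coeffs(f: Callable[[float], float], K: int, M: int = 256) -> Vector:
    """Chebyshev coefficients c_0..c_K of f on [-1, 1], computed at M Chebyshev-Gauss nodes."""
    thetas = [math.pi * (j + 0.5) / M for j in range(M)]
    vals = [f(math.cos(t)) for t in thetas]
    c = [2.0 / M * sum(v * math.cos(k * t) for v, t in zip(vals, thetas))
         for k in range(K + 1)]
    c[0] /= 2.0  # halve c_0 so that f(x) ~= sum_k c[k] * T_k(x)
    return c


def cheb_propagate(adj: Graph, s: Vector, f: Callable[[float], float], K: int) -> Vector:
    """Approximate f(A_hat) s by a degree-K Chebyshev series (three-term recurrence)."""
    if K < 1:
        raise ValueError("K must be at least 1")
    c = cheb_coeffs(f, K)
    t_prev, t_cur = s[:], sym_matvec(adj, s)  # T_0(A_hat) s and T_1(A_hat) s
    out = [c[0] * a + c[1] * b for a, b in zip(t_prev, t_cur)]
    for k in range(2, K + 1):
        # Recurrence: T_k(A_hat) s = 2 A_hat T_{k-1}(A_hat) s - T_{k-2}(A_hat) s
        t_next = [2.0 * a - b for a, b in zip(sym_matvec(adj, t_cur), t_prev)]
        out = [o + c[k] * t for o, t in zip(out, t_next)]
        t_prev, t_cur = t_cur, t_next
    return out


def power_propagate(adj: Graph, s: Vector, alpha: float, K: int) -> Vector:
    """Plain power iteration x <- (1 - alpha) A_hat x + alpha s, run for K steps."""
    x = [alpha * v for v in s]
    for _ in range(K):
        x = [(1 - alpha) * a + alpha * b for a, b in zip(sym_matvec(adj, x), s)]
    return x


if __name__ == "__main__":
    # Hypothetical toy graph: a 6-cycle with one chord (0-3).
    adj = [[1, 5, 3], [0, 2], [1, 3], [2, 4, 0], [3, 5], [4, 0]]
    alpha = 0.15
    s = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
    ppr = lambda lam: alpha / (1.0 - (1.0 - alpha) * lam)
    exact = power_propagate(adj, s, alpha, 500)  # effectively converged reference
    for K in (5, 10, 20):
        err_pow = max(abs(a - b) for a, b in zip(power_propagate(adj, s, alpha, K), exact))
        err_cheb = max(abs(a - b) for a, b in zip(cheb_propagate(adj, s, ppr, K), exact))
        print(f"K={K:2d}  power iteration error={err_pow:.2e}  Chebyshev error={err_cheb:.2e}")
```

On this toy graph, 20 Chebyshev terms land far closer to the converged PPR vector than 20 power-iteration steps. With α = 0.15, the Chebyshev coefficients of f decay roughly like 0.56^k, while the power-series tail decays like 0.85^k. This is a small-scale instance of the convergence gap the abstract describes, not a check of the paper's specific bounds.

Swapping in another weight function reuses the same recurrence unchanged. For example, `lambda lam: math.exp(-t * (1 - lam))` gives a heat-kernel-style propagation with a hypothetical diffusion time `t`. The random-walk form P = A D^{-1} is related by a diagonal similarity: f(P)s = D^{1/2} f(Â) D^{-1/2} s.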