Scalable Modeling of Spatiotemporal Data using the Variational Autoencoder: an Application in Glaucoma


Aug 24, 2019

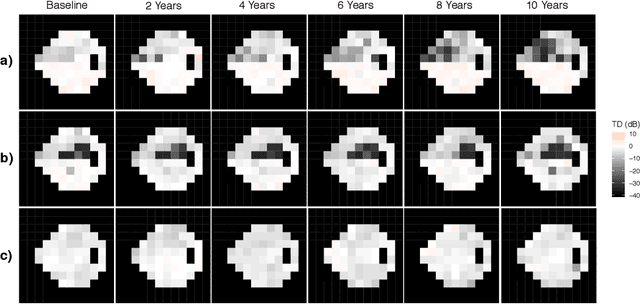

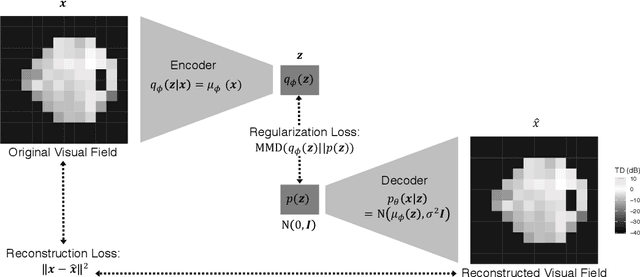

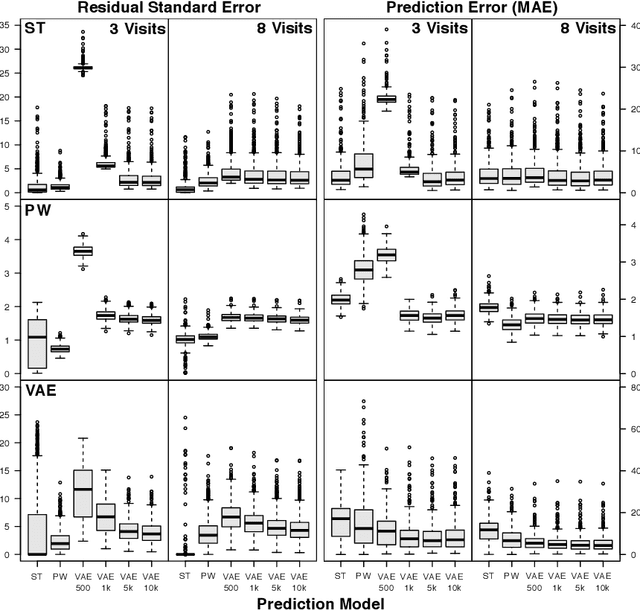

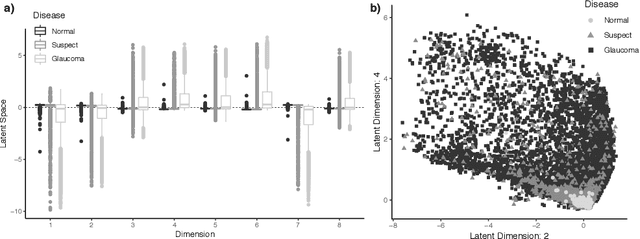

As big spatial data becomes increasingly prevalent, classical spatiotemporal (ST) methods often do not scale well. While methods have been developed to account for high-dimensional spatial objects, the setting in which there are exceedingly large samples of spatial observations has received less attention. The variational autoencoder (VAE), an unsupervised generative model based on deep learning and approximate Bayesian inference, fills this void using a latent variable specification that is inferred jointly across the large number of samples. In this manuscript, we compare the performance of the VAE with a more classical ST method when analyzing longitudinal visual fields from a large cohort of patients in a prospective glaucoma study. Through simulation and a case study, we demonstrate that the VAE is a scalable method for analyzing ST data when the goal is to obtain accurate predictions. R code to implement the VAE can be found on GitHub: https://github.com/berchuck/vaeST.
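For readers unfamiliar with the VAE, the latent variable specification mentioned above is usually fit by maximizing the evidence lower bound (ELBO). The block below is a sketch of that standard objective, not necessarily the exact form used in the manuscript. The notation is an assumption introduced here for illustration: $x_i$ is the $i$-th observation (for example, one visual field), $z_i$ is its latent vector, $p_\theta$ is the decoder, $q_\phi$ is the encoder, and $n$ is the number of observations.

```latex
% Standard VAE objective (generic form; notation is illustrative).
% The encoder parameters \phi and decoder parameters \theta are shared
% across all n observations, so inference is amortized over the sample.
\begin{align}
  \log p_\theta(x_i)
    &\ge \mathbb{E}_{q_\phi(z_i \mid x_i)}\!\left[ \log p_\theta(x_i \mid z_i) \right]
       - \mathrm{KL}\!\left( q_\phi(z_i \mid x_i) \,\|\, p(z_i) \right)
    \;=\; \mathcal{L}_i(\theta, \phi), \\
  (\hat{\theta}, \hat{\phi})
    &= \arg\max_{\theta, \phi} \sum_{i=1}^{n} \mathcal{L}_i(\theta, \phi).
\end{align}
```

Because $\theta$ and $\phi$ are shared across observations, the sum can be optimized with minibatch stochastic gradients, so the cost of each update does not grow with $n$. This is the usual reason the VAE is regarded as scalable to large samples.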
