Salient Skin Lesion Segmentation via Dilated Scale-Wise Feature Fusion Network

Paper and Code

May 20, 2022

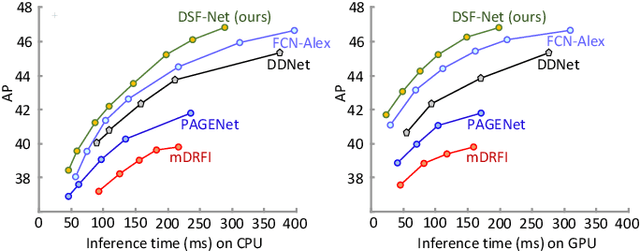

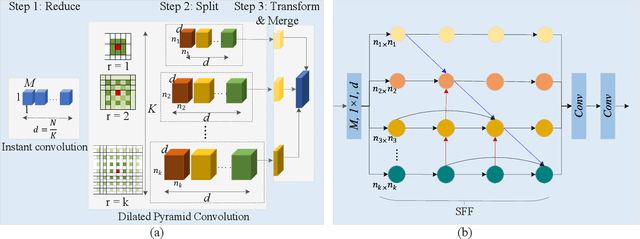

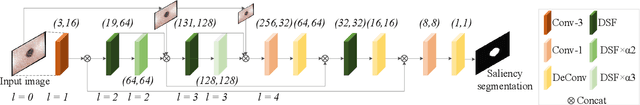

Skin lesion detection in dermoscopic images is essential in the accurate and early diagnosis of skin cancer by a computerized apparatus. Current skin lesion segmentation approaches show poor performance in challenging circumstances such as indistinct lesion boundaries, low contrast between the lesion and the surrounding area, or heterogeneous background that causes over/under segmentation of the skin lesion. To accurately recognize the lesion from the neighboring regions, we propose a dilated scale-wise feature fusion network based on convolution factorization. Our network is designed to simultaneously extract features at different scales which are systematically fused for better detection. The proposed model has satisfactory accuracy and efficiency. Various experiments for lesion segmentation are performed along with comparisons with the state-of-the-art models. Our proposed model consistently showcases state-of-the-art results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge