Safe-by-Repair: A Convex Optimization Approach for Repairing Unsafe Two-Level Lattice Neural Network Controllers

Paper and Code

Apr 06, 2021

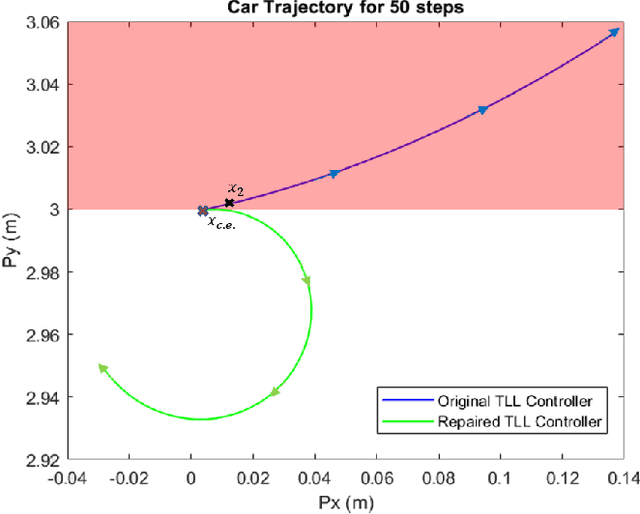

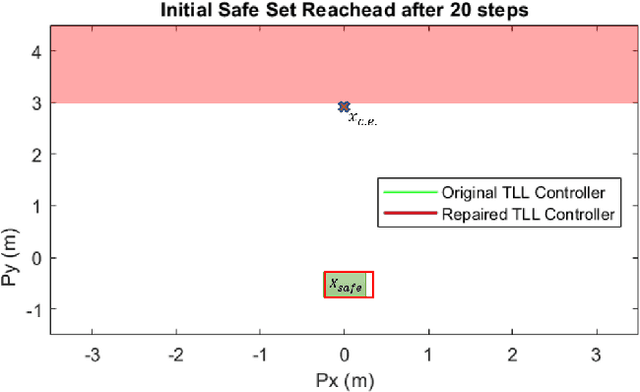

In this paper, we consider the problem of repairing a data-trained Rectified Linear Unit (ReLU) Neural Network (NN) controller for a discrete-time, input-affine system. That is we assume that such a NN controller is available, and we seek to repair unsafe closed-loop behavior at one known "counterexample" state while simultaneously preserving a notion of safe closed-loop behavior on a separate, verified set of states. To this end, we further assume that the NN controller has a Two-Level Lattice (TLL) architecture, and exhibit an algorithm that can systematically and efficiently repair such an network. Facilitated by this choice, our approach uses the unique semantics of the TLL architecture to divide the repair problem into two significantly decoupled sub-problems, one of which is concerned with repairing the un-safe counterexample -- and hence is essentially of local scope -- and the other of which ensures that the repairs are realized in the output of the network -- and hence is essentially of global scope. We then show that one set of sufficient conditions for solving each these sub-problems can be cast as a convex feasibility problem, and this allows us to formulate the TLL repair problem as two separate, but significantly decoupled, convex optimization problems. Finally, we evaluate our algorithm on a TLL controller on a simple dynamical model of a four-wheel-car.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge