SAFA: a Semi-Asynchronous Protocol for Fast Federated Learning with Low Overhead

Paper and Code

Oct 03, 2019

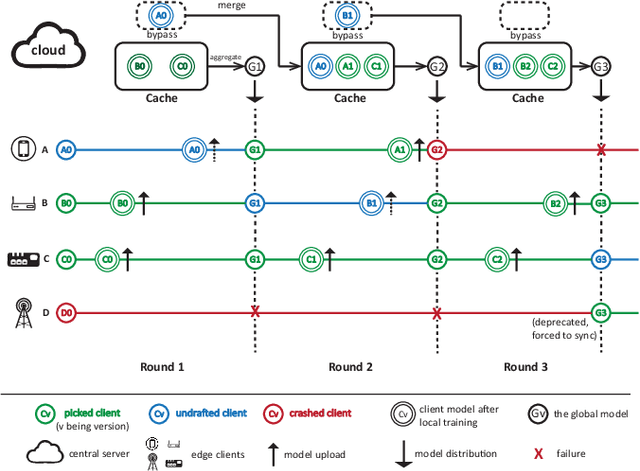

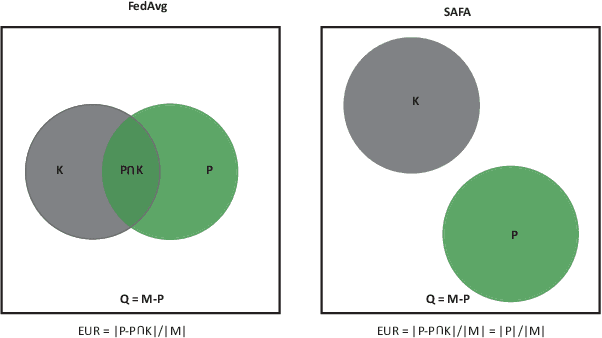

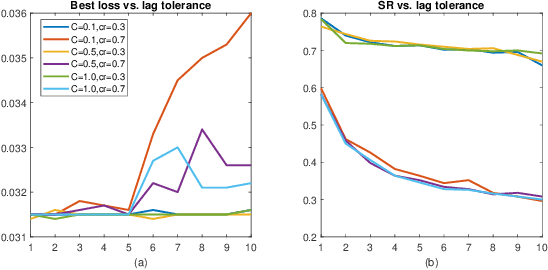

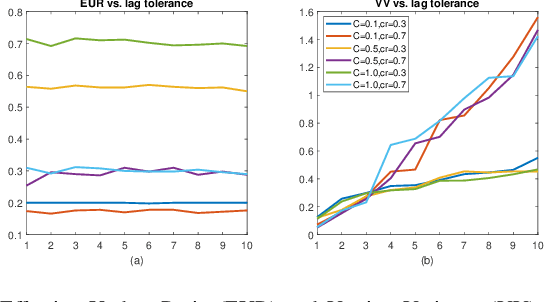

Federated learning (FL) has attracted increasing attention as a promising approach to powering a vast number of edge devices with artificial intelligence, i.e., Edge Intelligence. However, guaranteeing the efficiency of FL is very challenging given the unreliable nature of edge devices and the non-negligible cost of edge-server communication. In this paper, we propose SAFA, a semi-asynchronous protocol that avoids problems of the purely synchronous and purely asynchronous approaches, such as heavy downlink traffic and a poor convergence rate in extreme conditions (e.g., clients dropping offline frequently). Key principles are introduced in model distribution, client selection, and global aggregation, all designed to tolerate stragglers in order to boost efficiency and reduce bias. Extensive experiments on typical machine learning tasks show the effectiveness of the proposed protocol in shortening federated round duration, reducing local resource wastage, and improving the global model's accuracy at a low communication cost.
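To make the semi-asynchronous idea concrete, the following is a minimal sketch of one way a server could tolerate stragglers: it aggregates once a quorum of updates has arrived, and it keeps late updates for the next round instead of discarding them. This is a generic illustration, not the SAFA algorithm as specified in the paper; the abstract does not give those details. The names `semi_async_round`, `cache`, `pending`, and `quorum`, and the plain unweighted average, are all assumptions made for this example.

```python
def average(models):
    """Element-wise mean of equally sized parameter lists."""
    n = len(models)
    return [sum(values) / n for values in zip(*models)]


def semi_async_round(cache, pending, arrivals, quorum):
    """Run one illustrative semi-asynchronous round (hypothetical helper).

    cache:    dict mapping client_id -> latest model accepted by the server
    pending:  dict mapping client_id -> late model left over from the last round
    arrivals: list of (client_id, model) pairs ordered by arrival time
    quorum:   number of updates the server waits for before aggregating

    Returns (new_global_model, new_pending). The cache is updated in place.
    """
    # Straggler updates from the previous round are merged now rather than dropped.
    cache.update(pending)

    # Only the first `quorum` arrivals count as on time; the rest are deferred.
    on_time, late = arrivals[:quorum], arrivals[quorum:]

    # On-time updates are applied last so they override older cached entries.
    cache.update(on_time)

    # Assumption: unweighted average over every client's most recent entry.
    new_global = average(list(cache.values()))
    return new_global, dict(late)
```

In this sketch the round length is bounded by the quorum rather than by the slowest client, while a straggler's work still reaches the global model one round later. A real protocol would also need rules for which clients receive the new global model and how stale entries are handled.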
