Robust Model Evaluation over Large-scale Federated Networks

Paper and Code

Oct 26, 2024

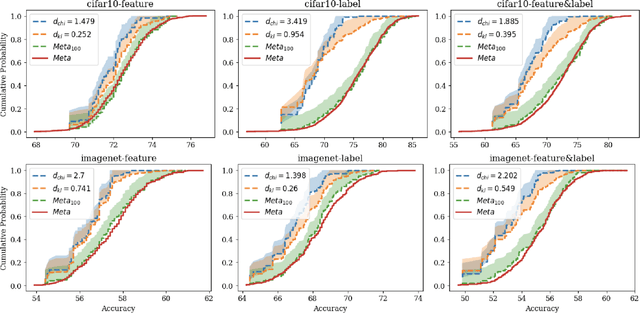

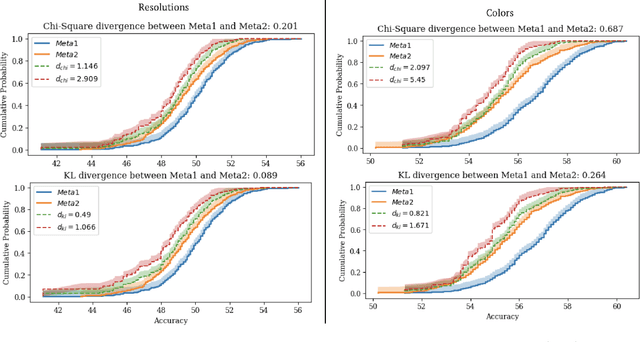

In this paper, we address the challenge of certifying the performance of a machine learning model on an unseen target network, using measurements from an available source network. We focus on a scenario where heterogeneous datasets are distributed across a source network of clients, all connected to a central server. Specifically, consider a source network "A" composed of $K$ clients, each holding private data from unique and heterogeneous distributions, which are assumed to be independent samples from a broader meta-distribution $\mu$. Our goal is to provide certified guarantees for the model's performance on a different, unseen target network "B," governed by another meta-distribution $\mu'$, assuming the deviation between $\mu$ and $\mu'$ is bounded by either the Wasserstein distance or an $f$-divergence. We derive theoretical guarantees for the model's empirical average loss and provide uniform bounds on the risk CDF, where the latter correspond to novel and adversarially robust versions of the Glivenko-Cantelli theorem and the Dvoretzky-Kiefer-Wolfowitz (DKW) inequality. Our bounds are computable in polynomial time with a polynomial number of queries to the $K$ clients, preserving client privacy by querying only the model's (potentially adversarial) loss on private data. We also establish non-asymptotic generalization bounds that consistently converge to zero as both $K$ and the minimum client sample size grow. Extensive empirical evaluations validate the robustness and practicality of our bounds across real-world tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge