Robust Ego and Object 6-DoF Motion Estimation and Tracking

Paper and Code

Jul 28, 2020

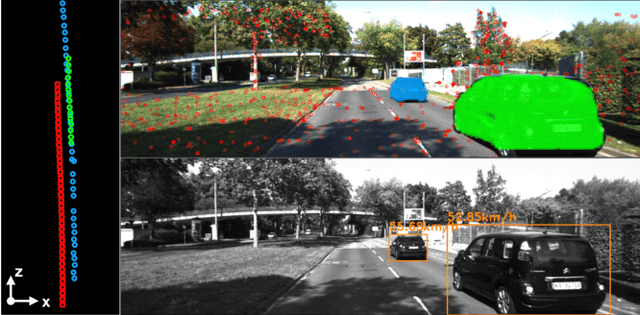

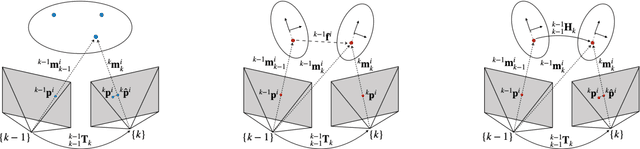

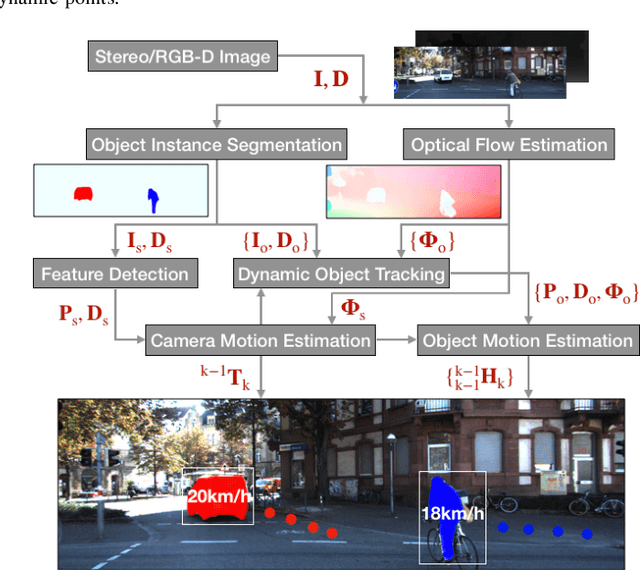

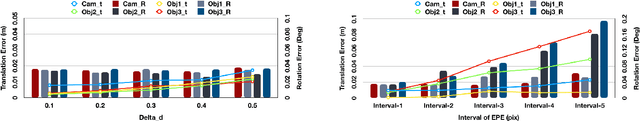

The problem of tracking self-motion as well as motion of objects in the scene using information from a camera is known as multi-body visual odometry and is a challenging task. This paper proposes a robust solution to achieve accurate estimation and consistent track-ability for dynamic multi-body visual odometry. A compact and effective framework is proposed leveraging recent advances in semantic instance-level segmentation and accurate optical flow estimation. A novel formulation, jointly optimizing SE(3) motion and optical flow is introduced that improves the quality of the tracked points and the motion estimation accuracy. The proposed approach is evaluated on the virtual KITTI Dataset and tested on the real KITTI Dataset, demonstrating its applicability to autonomous driving applications. For the benefit of the community, we make the source code public.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge