Robotic self-representation improves manipulation skills and transfer learning


Nov 13, 2020

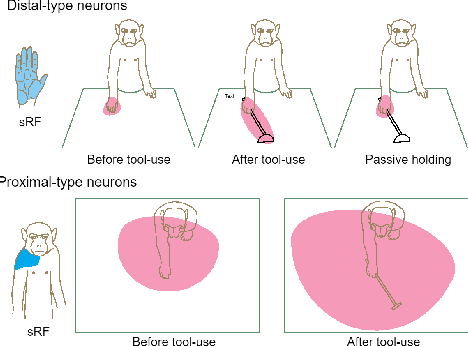

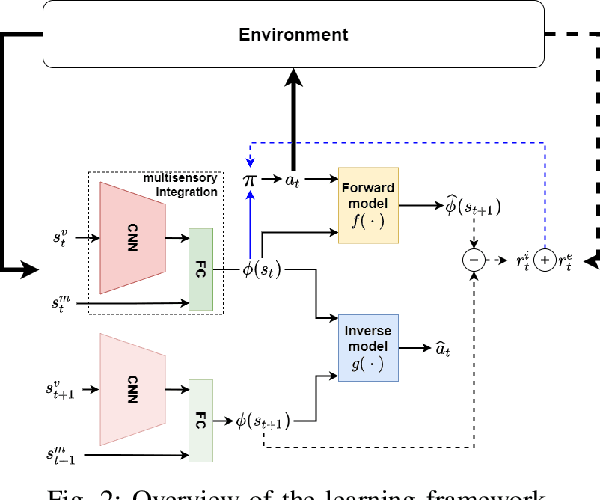

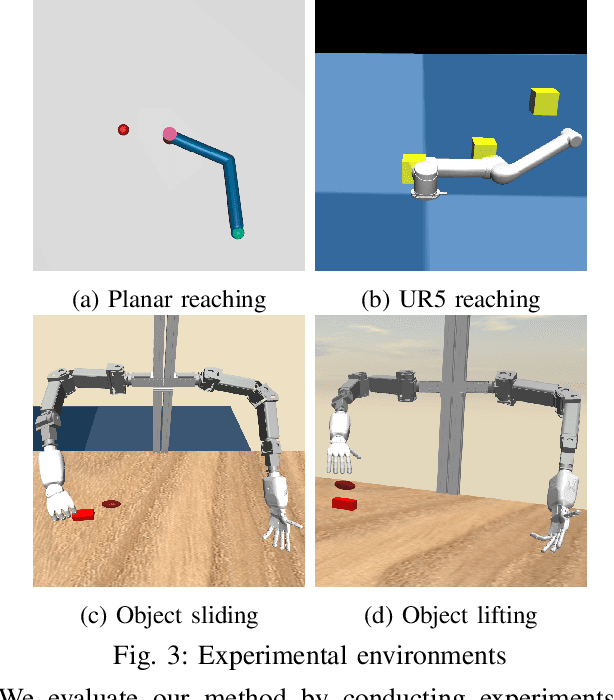

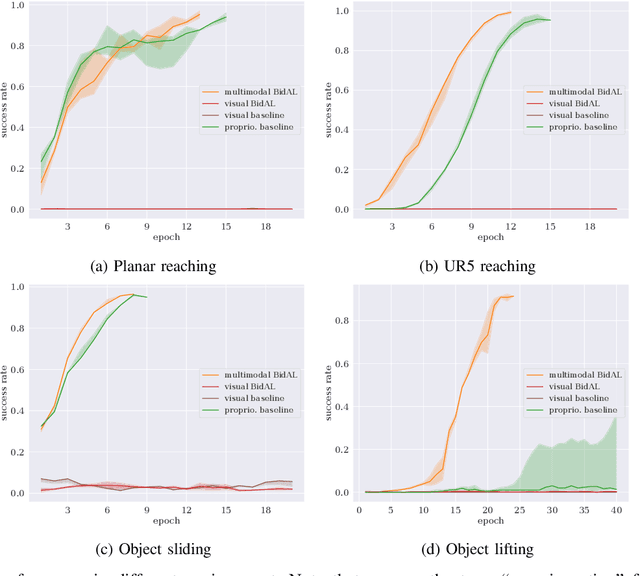

Cognitive science suggests that self-representation is critical for learning and problem-solving. However, computational methods that relate this claim to cognitively plausible robots and reinforcement learning are lacking. In this paper, we bridge this gap by developing a model, named multimodal BidAL, that learns bidirectional action-effect associations to encode representations of the body schema and the peripersonal space from multisensory information. Through three different robotic experiments, we demonstrate that this approach significantly stabilizes learning-based problem-solving under noisy conditions and that it improves transfer learning of robotic manipulation skills.

* Submitted to IEEE Robotics and Automation Letters (RA-L) 2021 with International Conference on Robotics and Automation (ICRA) 2021 Conference Option
