Reservoir Memory Machines as Neural Computers


Sep 14, 2020

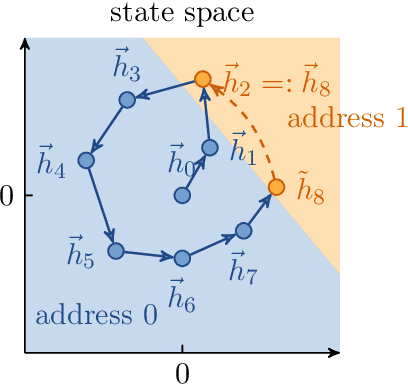

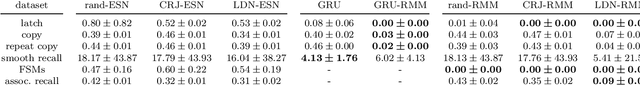

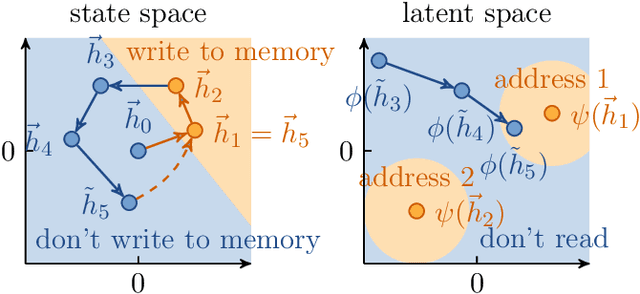

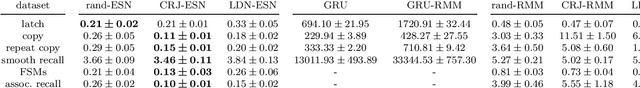

Differentiable neural computers extend artificial neural networks with an explicit memory without interference, which enables the model to perform classic computation tasks such as graph traversal. However, such models are difficult to train and require long training times and large datasets. In this work, we achieve some of the computational capabilities of differentiable neural computers with a model that can be trained extremely efficiently, namely an echo state network with an explicit memory without interference. This extension raises the computational power of echo state networks from strictly less than finite state machines to strictly more than finite state machines. Further, we demonstrate experimentally that our model performs comparably to its fully trained deep version on several typical benchmark tasks for differentiable neural computers.
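To illustrate why echo state networks can be trained so efficiently, the following is a minimal sketch of a plain echo state network: the recurrent reservoir is random and stays fixed, and only a linear readout is fit in closed form by ridge regression. It is not the model from the paper and it omits the explicit memory entirely. The function names (`make_reservoir`, `run_reservoir`, `fit_readout`), the hyperparameters, and the delay task are all assumptions chosen for illustration.

```python
import numpy as np


def make_reservoir(n_in, n_res, spectral_radius=0.9, seed=0):
    """Draw fixed random input and recurrent weights (never trained)."""
    rng = np.random.default_rng(seed)
    W_in = rng.uniform(-0.5, 0.5, size=(n_res, n_in))
    W = rng.uniform(-0.5, 0.5, size=(n_res, n_res))
    # Rescale so the largest absolute eigenvalue equals spectral_radius,
    # a common heuristic for keeping the reservoir dynamics stable.
    W *= spectral_radius / np.max(np.abs(np.linalg.eigvals(W)))
    return W_in, W


def run_reservoir(W_in, W, X):
    """Collect the reservoir state for every time step of the sequence X."""
    h = np.zeros(W.shape[0])
    H = np.empty((len(X), W.shape[0]))
    for t, x in enumerate(X):
        h = np.tanh(W_in @ x + W @ h)
        H[t] = h
    return H


def fit_readout(H, Y, ridge=1e-4):
    """Fit the linear readout by ridge regression (the only trained part)."""
    A = H.T @ H + ridge * np.eye(H.shape[1])
    return np.linalg.solve(A, H.T @ Y)


# Hypothetical toy task: reproduce the input signal delayed by 3 steps.
rng = np.random.default_rng(1)
X = rng.uniform(-1.0, 1.0, size=(500, 1))
Y = np.roll(X, 3, axis=0)
Y[:3] = 0.0  # no valid target before the delay has elapsed

W_in, W = make_reservoir(n_in=1, n_res=100)
H = run_reservoir(W_in, W, X)
V = fit_readout(H, Y)
mse = float(np.mean((H @ V - Y) ** 2))
print("training MSE:", mse)
```

Because training reduces to solving one linear system, there is no backpropagation through time. The trade-off is that a plain reservoir only has a fading memory of past inputs, which is the limitation the explicit memory described above is meant to address.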
