Representation of Federated Learning via Worst-Case Robust Optimization Theory


Dec 11, 2019

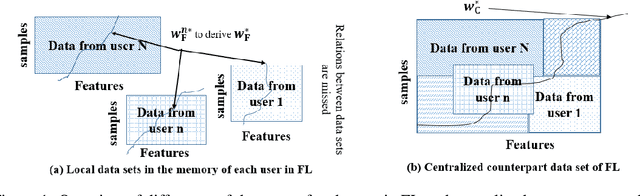

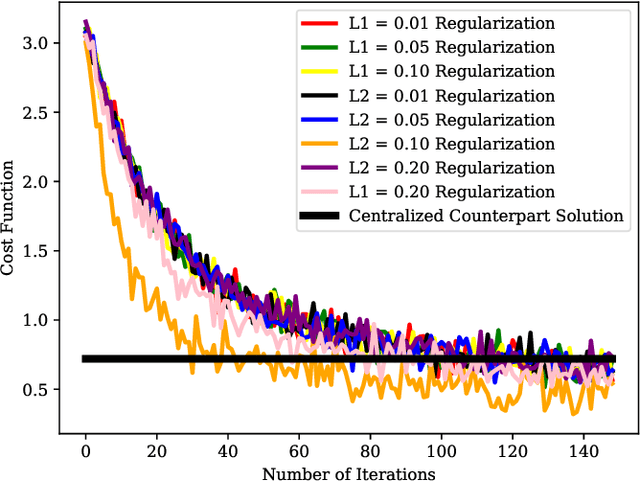

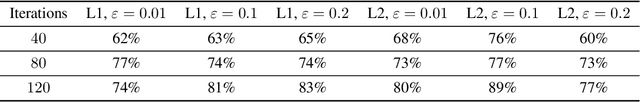

Federated learning (FL) is a distributed learning approach in which a set of end-user devices participate in the learning process by acting on their isolated local data sets. Here, we use worst-case optimization theory to reformulate the FL problem: the impact of each user's local data set during the training phase is modeled as an uncertain function bounded within a closed uncertainty region. This representation allows us to compare the performance of FL with its centralized counterpart and to replace the uncertain function with protection functions, leading to a more tractable formulation. The latter supports applying a regularization factor to each user's cost function in FL to achieve better performance. We evaluate our model on the MNIST data set as a function of the protection function parameters, e.g., regularization factors.
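To make the idea of a per-user regularization factor concrete, the following is a minimal sketch and not the formulation used in the paper. It assumes a one-parameter linear model on synthetic data (rather than MNIST), a squared-error cost, a plain L2 penalty standing in for the protection function, and size-weighted averaging at the server. All function names and parameter values (`local_update`, `federated_round`, `reg`, the learning rate, the number of rounds) are hypothetical and chosen only for illustration.

```python
import random


def local_update(w, data, reg, lr=0.1, steps=20):
    """Run gradient descent on one user's regularized cost.

    Assumed cost: mean((w * x - y)^2) + reg * w^2, where `reg` plays the
    role of the regularization factor (an illustrative stand-in only).
    """
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        grad += 2 * reg * w  # gradient of the L2 penalty
        w -= lr * grad
    return w


def federated_round(w_global, user_data, reg):
    """One averaging round: users train locally, the server averages
    their parameters weighted by local data set size."""
    total = sum(len(d) for d in user_data)
    updates = [local_update(w_global, d, reg) for d in user_data]
    return sum(len(d) * w for d, w in zip(user_data, updates)) / total


def make_user(slope, n, rng):
    """Synthetic local data set: y = slope * x + small Gaussian noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, slope * x + rng.gauss(0, 0.05)) for x in xs]


def train(user_data, reg, rounds=25):
    """Train from w = 0 for a fixed number of federated rounds."""
    w = 0.0
    for _ in range(rounds):
        w = federated_round(w, user_data, reg)
    return w


rng = random.Random(0)
# Each user sees a slightly different slope, mimicking heterogeneous local data.
users = [make_user(s, 30, rng) for s in (1.8, 2.0, 2.2)]

w_plain = train(users, reg=0.0)  # no regularization
w_reg = train(users, reg=0.1)    # with the assumed L2 regularization factor
print(w_plain, w_reg)
```

In this toy setting the regularized run ends with a smaller parameter than the unregularized one, which only shows how the factor enters each user's cost. Whether regularization improves performance, as the abstract reports for MNIST, depends on the actual protection function and data, which this sketch does not reproduce.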
