Representation Learning for Visual-Relational Knowledge Graphs


Mar 31, 2018

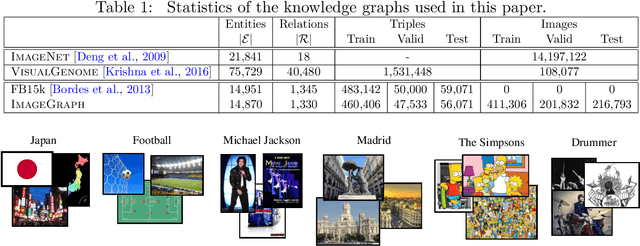

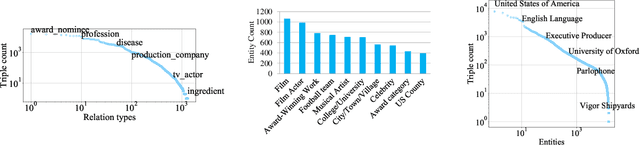

A visual-relational knowledge graph (KG) is a multi-relational graph whose entities are associated with images. We introduce ImageGraph, a KG with 1,330 relation types, 14,870 entities, and 829,931 images. Visual-relational KGs lead to novel probabilistic query types where images are treated as first-class citizens. Both the prediction of relations between unseen images and multi-relational image retrieval can be formulated as query types in a visual-relational KG. We approach the problem of answering such queries with a novel combination of deep convolutional networks and models for learning knowledge graph embeddings. The resulting models can answer queries such as "How are these two unseen images related to each other?" We also explore a zero-shot learning scenario where an image of an entirely new entity is linked with multiple relations to entities of an existing KG. The multi-relational grounding of unseen entity images into a knowledge graph serves as the description of such an entity. We conduct experiments to demonstrate that the proposed deep architectures in combination with KG embedding objectives can answer the visual-relational queries efficiently and accurately.
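As a rough illustration of the query "How are these two unseen images related to each other?", the sketch below scores every relation type for a pair of image embeddings and ranks them. It rests on several assumptions that the abstract does not confirm: the image vectors are random stand-ins for the output of a convolutional network, the scoring function is DistMult (one common KG embedding objective, chosen here only for brevity), and the relation names and the helpers `distmult_score` and `rank_relations` are hypothetical.

```python
import random


def distmult_score(head, relation, tail):
    """DistMult triple score: sum over dimensions of head * relation * tail."""
    return sum(h * r * t for h, r, t in zip(head, relation, tail))


def rank_relations(head_vec, tail_vec, relation_embeddings):
    """Return relation names ordered from highest to lowest score."""
    scores = {
        name: distmult_score(head_vec, rel_vec, tail_vec)
        for name, rel_vec in relation_embeddings.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


# Assumption: in a real system these vectors would come from a CNN applied
# to the two query images; random values stand in for them here.
rng = random.Random(0)
dim = 8
image_a = [rng.gauss(0, 1) for _ in range(dim)]
image_b = [rng.gauss(0, 1) for _ in range(dim)]

# Assumption: hypothetical relation types with (normally learned) embeddings.
relations = {
    name: [rng.gauss(0, 1) for _ in range(dim)]
    for name in ("locatedIn", "capitalOf", "bornIn")
}

ranking = rank_relations(image_a, image_b, relations)
print(ranking)
```

In a trained model the convolutional network and the relation embeddings would be learned jointly, so the ranking would reflect the KG rather than random initialisation. Image retrieval follows the same pattern with the relation fixed and the candidate tail images ranked instead.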
