RelatIF: Identifying Explanatory Training Examples via Relative Influence


Mar 25, 2020

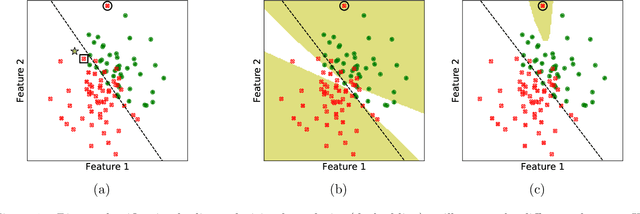

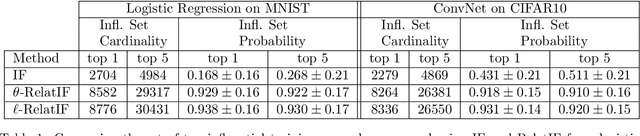

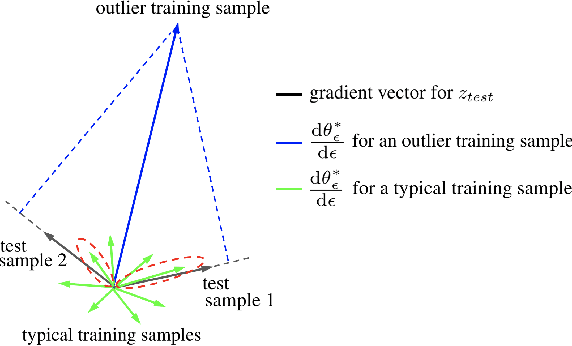

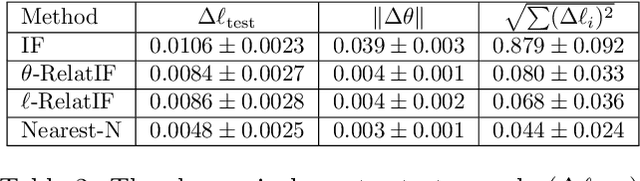

In this work, we focus on the use of influence functions to identify relevant training examples that one might hope "explain" the predictions of a machine learning model. One shortcoming of influence functions is that the training examples deemed most "influential" are often outliers or mislabelled, making them poor choices for explanation. To address this shortcoming, we separate the roles of global and local influence. We introduce RelatIF, a new class of criteria for choosing relevant training examples by way of an optimization objective that places a constraint on global influence. RelatIF considers the local influence that an explanatory example has on a prediction relative to its global effects on the model. In empirical evaluations, we find that the examples returned by RelatIF are more intuitive than those found using influence functions.
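
As a rough illustration of the idea above, the following sketch computes classical influence scores for a small L2-regularised logistic regression and then rescales each score by the size of the parameter change that upweighting the training example would cause. The rescaling rule (dividing by the norm of `H^{-1} g_i`) is an assumption chosen here to illustrate "local influence relative to global effect"; the abstract does not state the exact RelatIF formula. The toy data, function names, and hyperparameters are likewise hypothetical.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def hessian(X, w, lam):
    """Hessian of the mean logistic loss plus an L2 penalty."""
    n, d = X.shape
    p = sigmoid(X @ w)
    return (X.T * (p * (1 - p))) @ X / n + lam * np.eye(d)

def fit_logreg(X, y, lam=1e-2, steps=50):
    """Newton's method for L2-regularised logistic regression (labels in {0, 1})."""
    n, d = X.shape
    w = np.zeros(d)
    for _ in range(steps):
        grad = X.T @ (sigmoid(X @ w) - y) / n + lam * w
        w -= np.linalg.solve(hessian(X, w, lam), grad)
    return w

def influence_scores(X, y, w, x_test, y_test, lam=1e-2):
    """Return (influence, relative) arrays, one entry per training example."""
    H = hessian(X, w, lam)
    G = (sigmoid(X @ w) - y)[:, None] * X              # per-example loss gradients
    g_test = (sigmoid(x_test @ w) - y_test) * x_test   # test-loss gradient
    param_change = np.linalg.solve(H, G.T).T           # row i is H^{-1} g_i
    # Classical influence of upweighting example i on the test loss.
    influence = -(param_change @ g_test)
    # Assumed normalisation: divide by the global parameter change.
    relative = influence / (np.linalg.norm(param_change, axis=1) + 1e-12)
    return influence, relative

# Hypothetical toy data, for illustration only.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
w_true = np.array([2.0, -1.0, 0.5])
y = (X @ w_true + rng.normal(scale=0.5, size=200) > 0).astype(float)
x_test, y_test = np.array([1.0, 0.5, -0.2]), 1.0

w = fit_logreg(X, y)
influence, relative = influence_scores(X, y, w, x_test, y_test)

# Most negative score = upweighting the example lowers the test loss the most.
print("top by influence:         ", np.argsort(influence)[:5])
print("top by relative influence:", np.argsort(relative)[:5])
```

Under this assumed normalisation, an example with a very large gradient (as an outlier or mislabelled point often has) no longer ranks highly just because it moves the whole model; it has to affect the test prediction strongly per unit of global change.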
