Reinforced Multi-teacher Knowledge Distillation for Efficient General Image Forgery Detection and Localization

Paper and Code

Apr 07, 2025

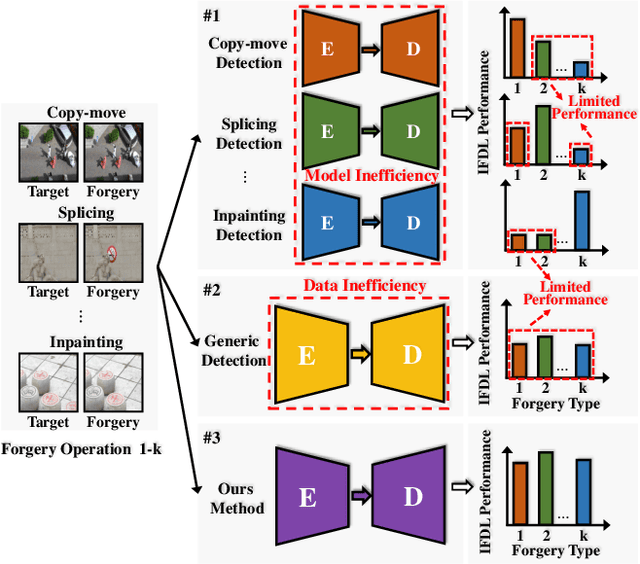

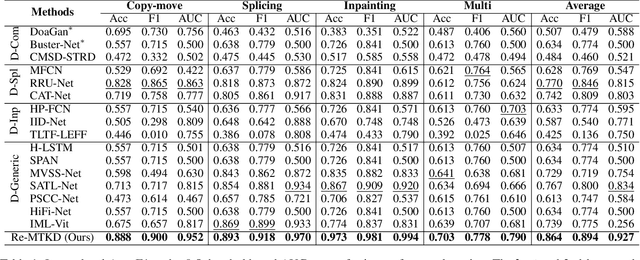

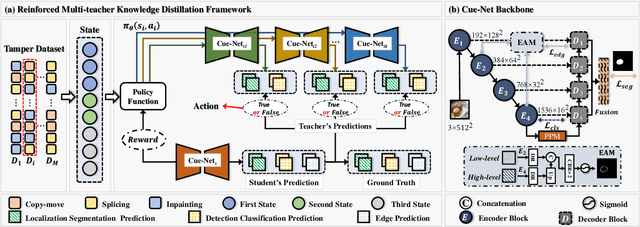

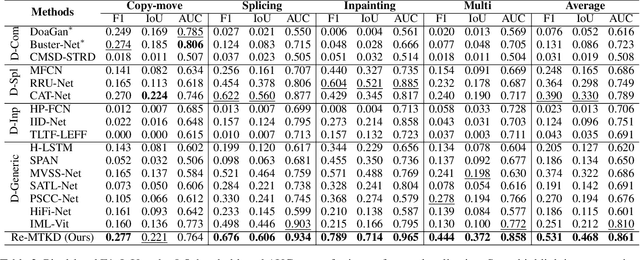

Image forgery detection and localization (IFDL) is of vital importance as forged images can spread misinformation that poses potential threats to our daily lives. However, previous methods still struggled to effectively handle forged images processed with diverse forgery operations in real-world scenarios. In this paper, we propose a novel Reinforced Multi-teacher Knowledge Distillation (Re-MTKD) framework for the IFDL task, structured around an encoder-decoder \textbf{C}onvNeXt-\textbf{U}perNet along with \textbf{E}dge-Aware Module, named Cue-Net. First, three Cue-Net models are separately trained for the three main types of image forgeries, i.e., copy-move, splicing, and inpainting, which then serve as the multi-teacher models to train the target student model with Cue-Net through self-knowledge distillation. A Reinforced Dynamic Teacher Selection (Re-DTS) strategy is developed to dynamically assign weights to the involved teacher models, which facilitates specific knowledge transfer and enables the student model to effectively learn both the common and specific natures of diverse tampering traces. Extensive experiments demonstrate that, compared with other state-of-the-art methods, the proposed method achieves superior performance on several recently emerged datasets comprised of various kinds of image forgeries.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge