Regret Bounds for Noise-Free Bayesian Optimization


Feb 12, 2020

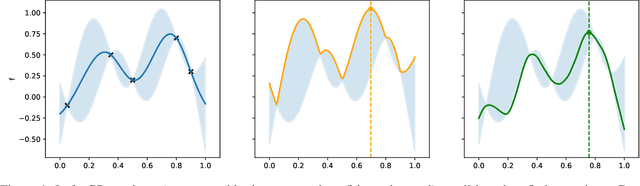

Bayesian optimization is a powerful method for non-convex black-box optimization in low-data regimes. However, establishing tight upper bounds on the regret of common algorithms in the noiseless setting remains a largely open question. In this paper, we establish new bounds, the tightest to date, for two algorithms, namely GP-UCB and Thompson sampling, under the assumption that the objective function is smooth in the sense of having a bounded norm in a Matérn RKHS. Importantly, unlike several related works, we do not assume perfect knowledge of the kernel of the Gaussian process emulator used within the Bayesian optimization loop. This allows us to provide results for practical algorithms that sequentially estimate the Gaussian process kernel parameters from the available data.
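
For readers unfamiliar with GP-UCB, the following is a minimal illustrative sketch of a noise-free GP-UCB loop with a Matérn-5/2 kernel on a 1-D grid. It is not the paper's implementation: the function names (`matern52`, `gp_posterior`, `gp_ucb`), the fixed `lengthscale`, the constant exploration weight `beta`, and the small `jitter` term are all assumptions made for illustration. In particular, the lengthscale is held fixed here, whereas the abstract concerns algorithms that estimate kernel parameters sequentially.

```python
import numpy as np


def matern52(a, b, lengthscale=0.2):
    """Matern-5/2 kernel (unit prior variance) between 1-D point arrays a and b."""
    r = np.abs(a[:, None] - b[None, :]) / lengthscale
    s = np.sqrt(5.0) * r
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(x_obs, y_obs, x_grid, lengthscale=0.2, jitter=1e-6):
    """Zero-mean GP posterior mean and standard deviation on x_grid.

    Observations are treated as noise-free; `jitter` is only a small
    diagonal term added for numerical stability (an assumption of this sketch).
    """
    K = matern52(x_obs, x_obs, lengthscale) + jitter * np.eye(len(x_obs))
    Ks = matern52(x_grid, x_obs, lengthscale)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    v = np.linalg.solve(L, Ks.T)
    mean = Ks @ alpha
    var = 1.0 - np.sum(v**2, axis=0)  # prior variance is 1 for this kernel
    return mean, np.sqrt(np.clip(var, 0.0, None))


def gp_ucb(f, x_grid, n_iter=15, beta=4.0, lengthscale=0.2):
    """Run GP-UCB on a finite grid, starting from the grid midpoint."""
    x_obs = np.array([x_grid[len(x_grid) // 2]])
    y_obs = np.array([f(x_obs[0])])
    for _ in range(n_iter):
        mean, std = gp_posterior(x_obs, y_obs, x_grid, lengthscale)
        ucb = mean + np.sqrt(beta) * std  # upper confidence bound
        x_next = x_grid[np.argmax(ucb)]   # query the most optimistic point
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, f(x_next))
    return x_obs, y_obs


if __name__ == "__main__":
    # Hypothetical smooth test objective with its maximum at x = 0.3.
    objective = lambda x: -(x - 0.3) ** 2
    grid = np.linspace(0.0, 1.0, 101)
    xs, ys = gp_ucb(objective, grid)
    print("best x:", xs[np.argmax(ys)], "best f:", ys.max())
```

Thompson sampling would differ only in the acquisition step: instead of maximizing `mean + sqrt(beta) * std`, it would draw one sample from the GP posterior over the grid and query that sample's maximizer.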
