Registering Neural Radiance Fields as 3D Density Images

May 22, 2023

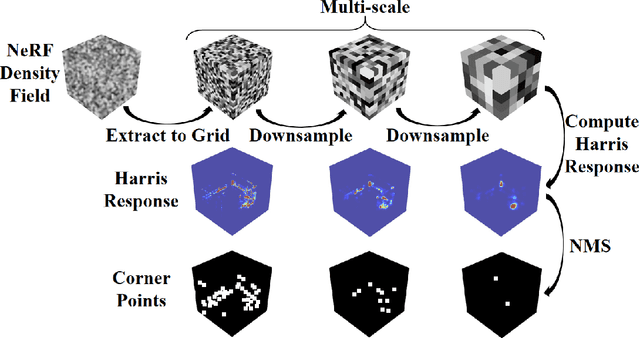

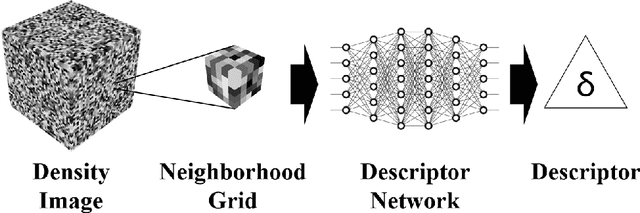

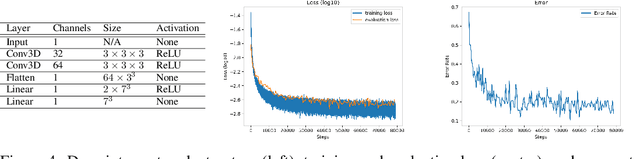

No significant work has been done to directly merge two partially overlapping scenes using NeRF representations. Given partially overlapping pre-trained NeRF models of a 3D scene, this paper aligns them with a rigid transform by generalizing the traditional registration pipeline (key point detection followed by point set registration) to operate on 3D density fields. To describe corner points as key points in 3D, we propose using universal pre-trained descriptor-generating neural networks that can be trained and tested on different scenes. Our experiments show that the descriptor networks can be conveniently trained using a contrastive learning strategy. We demonstrate that our method, as a global approach, can effectively register NeRF models, making it possible to build large-scale NeRFs in the future by registering smaller, overlapping NeRFs captured individually.
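The abstract does not say which contrastive objective the descriptor networks are trained with. As a rough illustration only, the sketch below uses an InfoNCE-style loss, a common choice that is assumed here and not taken from the paper. The function name `info_nce_loss`, the `temperature` value, and the toy descriptors are all hypothetical. Descriptors at index `i` in the two lists are treated as the same key point observed in two NeRFs, and every other pairing is treated as a negative.

```python
import math


def l2_normalize(v):
    """Scale a descriptor to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def info_nce_loss(desc_a, desc_b, temperature=0.1):
    """InfoNCE-style contrastive loss over paired descriptors.

    desc_a[i] and desc_b[i] are assumed to describe the same key point
    in two different NeRFs; all other pairs act as negatives.
    """
    a = [l2_normalize(v) for v in desc_a]
    b = [l2_normalize(v) for v in desc_b]
    total = 0.0
    for i, anchor in enumerate(a):
        # Cosine similarity of the anchor to every candidate, scaled by temperature.
        logits = [dot(anchor, cand) / temperature for cand in b]
        # Numerically stable log-sum-exp.
        m = max(logits)
        log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
        # Cross-entropy with the matching descriptor as the correct class.
        total += log_sum - logits[i]
    return total / len(a)


# Toy descriptors (hypothetical): three key points in a 3D descriptor space.
scene_a = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
matched_b = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
shuffled_b = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]

loss_matched = info_nce_loss(scene_a, matched_b)
loss_shuffled = info_nce_loss(scene_a, shuffled_b)
```

With these toy inputs the correctly matched pairs produce a much smaller loss than the shuffled pairs, which is the behavior a contrastive objective rewards: descriptors of the same key point are pulled together across scenes, and descriptors of different key points are pushed apart.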
