Recurrent Neural Network for Learning DenseDepth and Ego-Motion from Video

Paper and Code

May 17, 2018

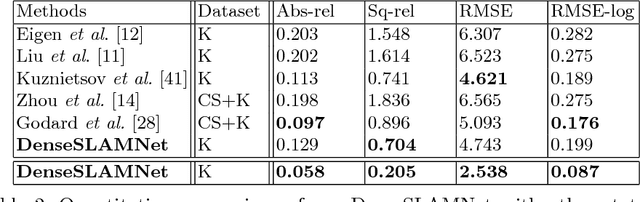

Learning-based, single-view depth estimation often generalizes poorly to unseen datasets. While learning-based, two-frame depth estimation solves this problem to some extent by learning to match features across frames, it performs poorly at large depth where the uncertainty is high. There exists few learning-based, multi-view depth estimation methods. In this paper, we present a learning-based, multi-view dense depth map and ego-motion estimation method that uses Recurrent Neural Networks (RNN). Our model is designed for 3D reconstruction from video where the input frames are temporally correlated. It is generalizable to single- or two-view dense depth estimation. Compared to recent single- or two-view CNN-based depth estimation methods, our model leverages more views and achieves more accurate results, especially at large distances. Our method produces superior results to the state-of-the-art learning-based, single- or two-view depth estimation methods on both indoor and outdoor benchmark datasets. We also demonstrate that our method can even work on extremely difficult sequences, such as endoscopic video, where none of the assumptions (static scene, constant lighting, Lambertian reflection, etc.) from traditional 3D reconstruction methods hold.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge