Reconstruction Bottlenecks in Object-Centric Generative Models


Jul 13, 2020

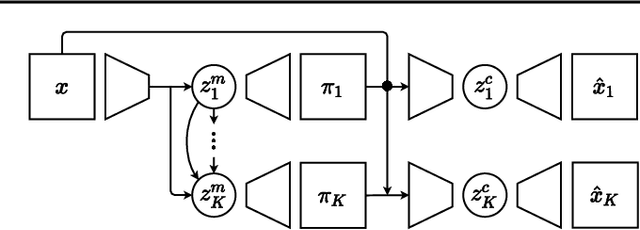

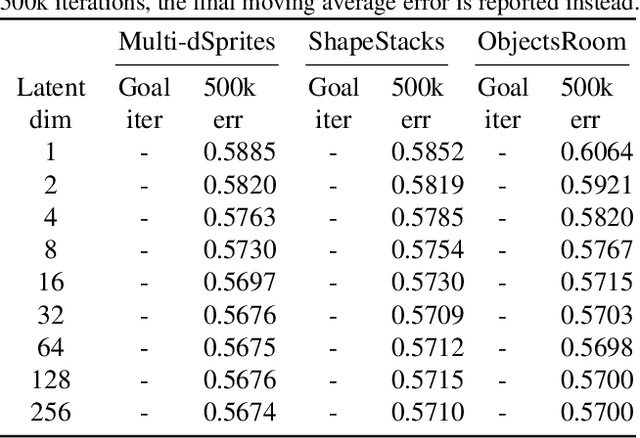

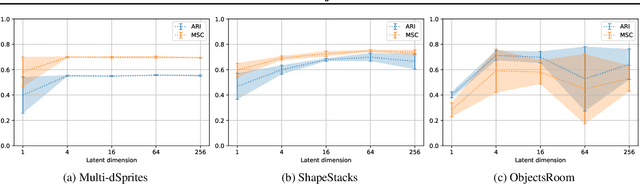

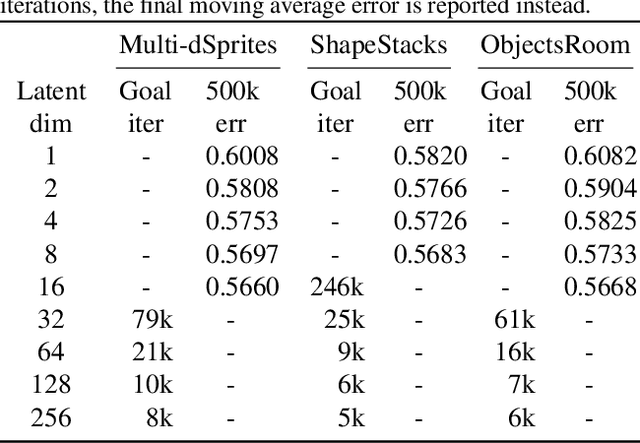

A range of methods with suitable inductive biases exist to learn interpretable object-centric representations of images without supervision. However, these are largely restricted to visually simple images; robust object discovery in real-world sensory datasets remains elusive. To increase the understanding of such inductive biases, we empirically investigate the role of "reconstruction bottlenecks" for scene decomposition in GENESIS, a recent VAE-based model. We show such bottlenecks determine reconstruction and segmentation quality and critically influence model behaviour.

* 10 pages, 7 figures, Workshop on Object-Oriented Learning at ICML 2020
