Reconstructing ERP Signals Using Generative Adversarial Networks for Mobile Brain-Machine Interface


May 18, 2020

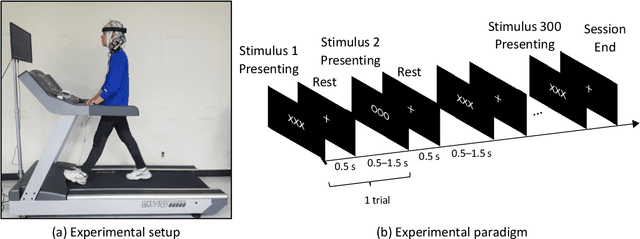

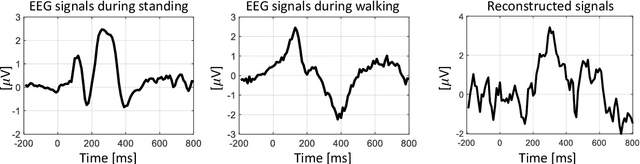

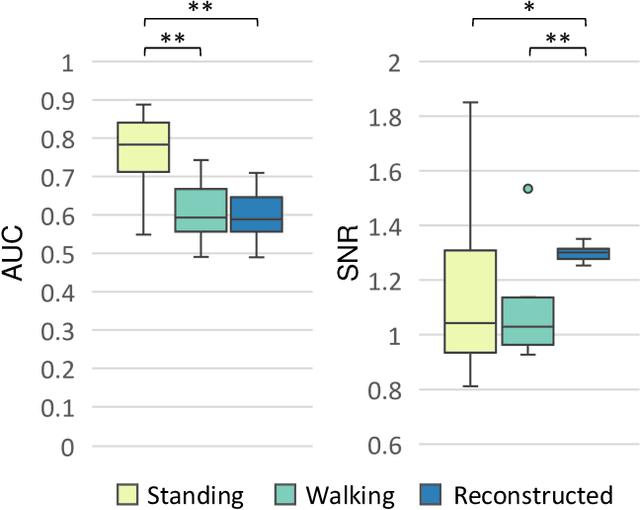

Practical brain-machine interfaces have been widely studied as a way to accurately detect human intentions from brain signals in the real world. However, electroencephalography (EEG) signals are distorted by artifacts from walking and head movement, and these artifacts can be larger in amplitude than the desired EEG signals. Because of these artifacts, accurately detecting human intention in a mobile environment is challenging. In this paper, we propose a reconstruction framework based on generative adversarial networks that uses event-related potentials (ERP) recorded during walking. We used a pre-trained convolutional encoder to represent latent variables and reconstructed the ERP through a generative model whose shape mirrors that of the encoder. Finally, the ERP was classified using a discriminative model to demonstrate the validity of the proposed framework. The reconstructed signals contained important components such as N200 and P300, similar to the ERP during standing. The accuracy of the reconstructed EEG was similar to that of the raw noisy EEG signals during walking. The signal-to-noise ratio of the reconstructed EEG was significantly increased (1.3). The loss of the generative model was 0.6301, which is comparatively low and indicates that the generative model trained well. The reconstructed ERP consequently showed an improvement in classification performance during walking through the effect of noise reduction. The proposed framework could help recognize human intention with a brain-machine interface even in a mobile environment.
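The abstract reports a signal-to-noise ratio for the reconstructed ERP but does not state how it was computed. As an illustration only, the sketch below uses one common convention, which is an assumption here and not necessarily the authors' definition: the RMS amplitude of the trial-averaged ERP in a post-stimulus window divided by its RMS amplitude in the pre-stimulus baseline. The function name `erp_snr`, the window limits, and the synthetic P300-like data are all hypothetical.

```python
import numpy as np


def erp_snr(epochs, times, signal_window=(0.2, 0.5), baseline_window=(-0.2, 0.0)):
    """Estimate an ERP signal-to-noise ratio (assumed definition, see lead-in).

    epochs: array of shape (n_trials, n_samples), single-channel EEG epochs.
    times:  array of shape (n_samples,), time in seconds relative to stimulus.
    """
    erp = epochs.mean(axis=0)  # trial average gives the ERP
    sig_mask = (times >= signal_window[0]) & (times <= signal_window[1])
    base_mask = (times >= baseline_window[0]) & (times < baseline_window[1])
    signal_rms = np.sqrt(np.mean(erp[sig_mask] ** 2))
    noise_rms = np.sqrt(np.mean(erp[base_mask] ** 2))
    return signal_rms / noise_rms


# Synthetic example: a P300-like bump buried in two levels of noise.
rng = np.random.default_rng(0)
times = np.linspace(-0.2, 0.8, 101)                           # 10 ms steps
template = 5.0 * np.exp(-((times - 0.3) ** 2) / (2 * 0.05 ** 2))

n_trials = 50
noisy_epochs = template + rng.normal(0.0, 10.0, size=(n_trials, times.size))
clean_epochs = template + rng.normal(0.0, 2.0, size=(n_trials, times.size))

snr_noisy = erp_snr(noisy_epochs, times)   # stands in for raw walking EEG
snr_clean = erp_snr(clean_epochs, times)   # stands in for reconstructed EEG
print(f"SNR noisy: {snr_noisy:.2f}, SNR cleaner: {snr_clean:.2f}")
```

Under this convention, a reconstruction that suppresses movement artifacts while preserving the N200 and P300 components would be expected to give a higher ratio than the raw walking data, which is the kind of comparison the abstract describes.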
