Real-time Trajectory-based Social Group Detection

Paper and Code

Apr 12, 2023

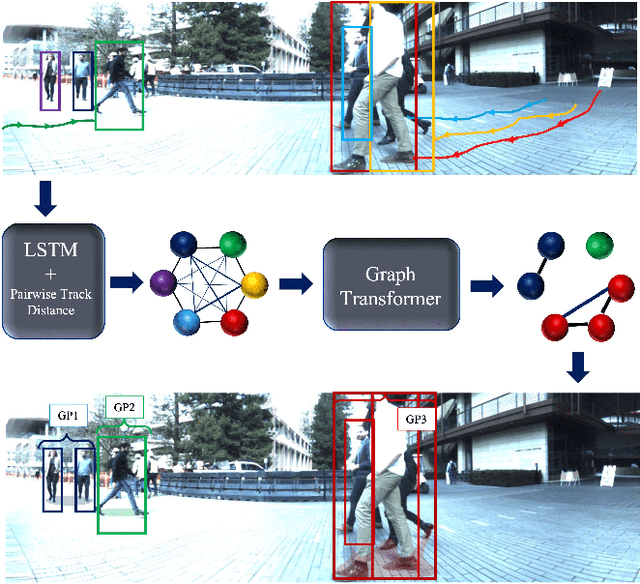

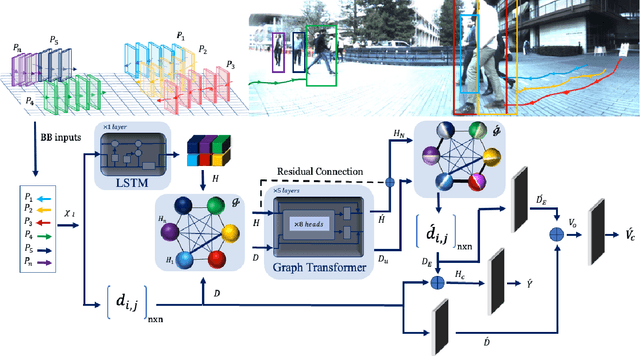

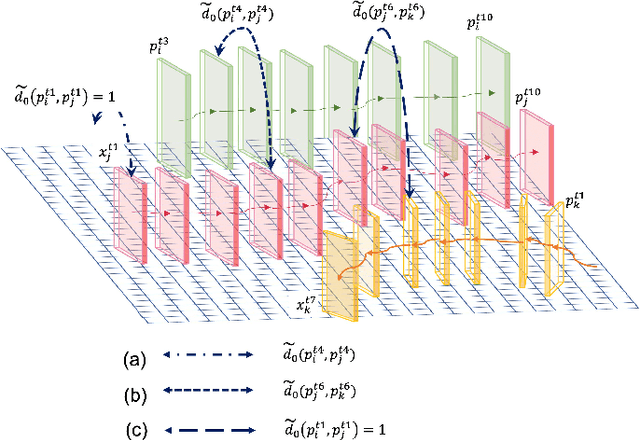

Social group detection is a crucial aspect of various robotic applications, including robot navigation and human-robot interactions. To date, a range of model-based techniques have been employed to address this challenge, such as the F-formation and trajectory similarity frameworks. However, these approaches often fail to provide reliable results in crowded and dynamic scenarios. Recent advancements in this area have mainly focused on learning-based methods, such as deep neural networks that use visual content or human pose. Although visual content-based methods have demonstrated promising performance on large-scale datasets, their computational complexity poses a significant barrier to their practical use in real-time applications. To address these issues, we propose a simple and efficient framework for social group detection. Our approach explores the impact of motion trajectory on social grouping and utilizes a novel, reliable, and fast data-driven method. We formulate the individuals in a scene as a graph, where the nodes are represented by LSTM-encoded trajectories and the edges are defined by the distances between each pair of tracks. Our framework employs a modified graph transformer module and graph clustering losses to detect social groups. Our experiments on the popular JRDBAct dataset reveal noticeable improvements in performance, with relative improvements ranging from 2% to 11%. Furthermore, our framework is significantly faster, with up to 12x faster inference times compared to state-of-the-art methods under the same computation resources. These results demonstrate that our proposed method is suitable for real-time robotic applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge