Real-Time Model Calibration with Deep Reinforcement Learning

Paper and Code

Jun 09, 2020

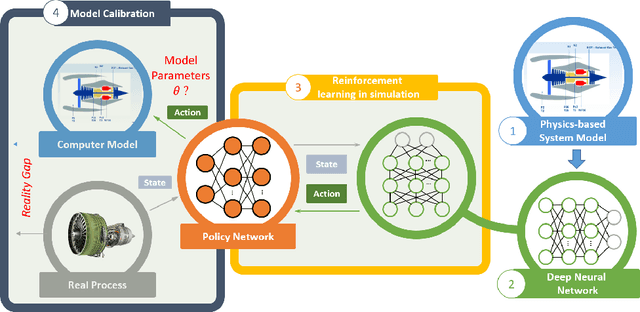

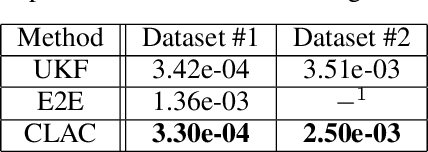

The dynamic, real-time, and accurate inference of model parameters from empirical data is of great importance in many scientific and engineering disciplines that use computational models (such as a digital twin) for the analysis and prediction of complex physical processes. However, fast and accurate inference for processes with large and high dimensional datasets cannot easily be achieved with state-of-the-art methods under noisy real-world conditions. The primary reason is that the inference of model parameters with traditional techniques based on optimisation or sampling often suffers from computational and statistical challenges, resulting in a trade-off between accuracy and deployment time. In this paper, we propose a novel framework for inference of model parameters based on reinforcement learning. The contribution of the paper is twofold: 1) We reformulate the inference problem as a tracking problem with the objective of learning a policy that forces the response of the physics-based model to follow the observations; 2) We propose the constrained Lyapunov-based actor-critic (CLAC) algorithm to enable the robust and accurate inference of physics-based model parameters in real time under noisy real-world conditions. The proposed methodology is demonstrated and evaluated on two model-based diagnostics test cases utilizing two different physics-based models of turbofan engines. The performance of the methodology is compared to that of two alternative approaches: a state update method (unscented Kalman filter) and a supervised end-to-end mapping with deep neural networks. The experimental results demonstrate that the proposed methodology outperforms all other tested methods in terms of speed and robustness, with high inference accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge