Real-Time Limited-View CT Inpainting and Reconstruction with Dual Domain Based on Spatial Information

Paper and Code

Jan 19, 2021

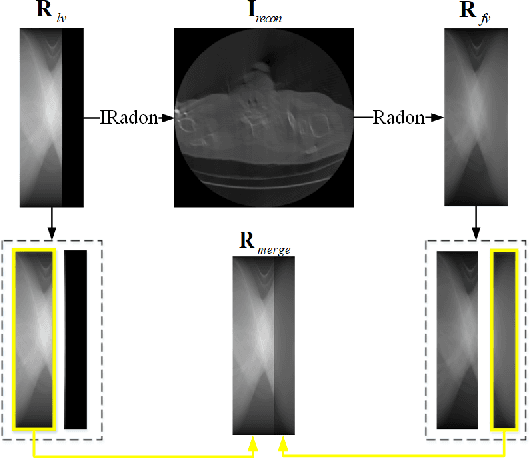

Low-dose Computed Tomography is a common issue in reality. Current reduction, sparse sampling and limited-view scanning can all cause it. Between them, limited-view CT is general in the industry due to inevitable mechanical and physical limitation. However, limited-view CT can cause serious imaging problem on account of its massive information loss. Thus, we should effectively utilize the scant prior information to perform completion. It is an undeniable fact that CT imaging slices are extremely dense, which leads to high continuity between successive images. We realized that fully exploit the spatial correlation between consecutive frames can significantly improve restoration results in video inpainting. Inspired by this, we propose a deep learning-based three-stage algorithm that hoist limited-view CT imaging quality based on spatial information. In stage one, to better utilize prior information in the Radon domain, we design an adversarial autoencoder to complement the Radon data. In the second stage, a model is built to perform inpainting based on spatial continuity in the image domain. At this point, we have roughly restored the imaging, while its texture still needs to be finely repaired. Hence, we propose a model to accurately restore the image in stage three, and finally achieve an ideal inpainting result. In addition, we adopt FBP instead of SART-TV to make our algorithm more suitable for real-time use. In the experiment, we restore and reconstruct the Radon data that has been cut the rear one-third part, they achieve PSNR of 40.209, SSIM of 0.943, while precisely present the texture.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge