REAL ML: Recognizing, Exploring, and Articulating Limitations of Machine Learning Research

May 05, 2022

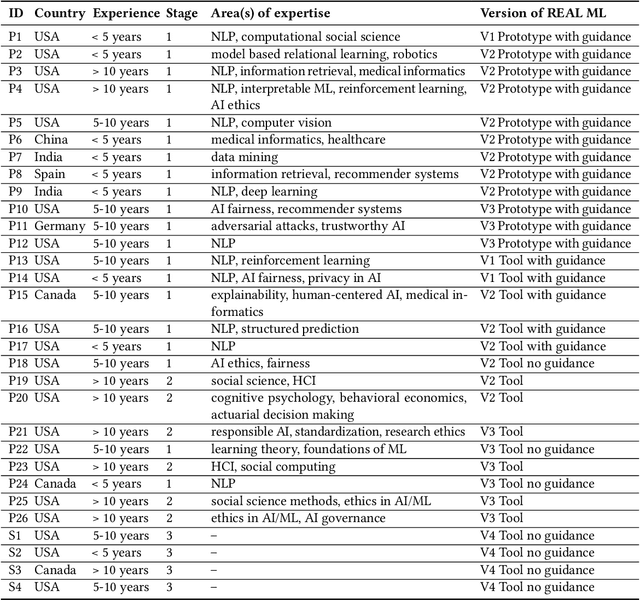

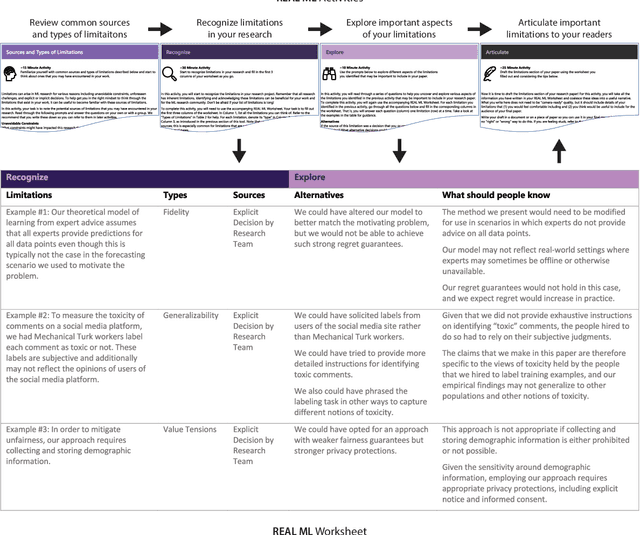

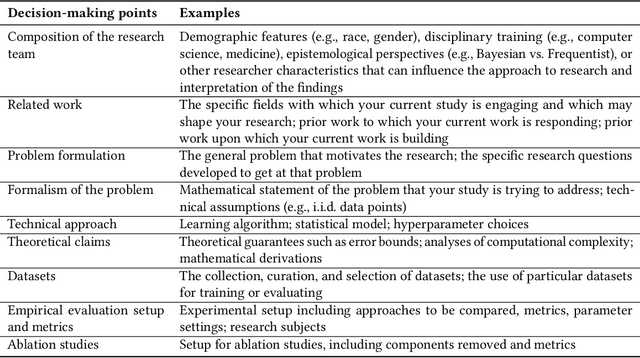

Transparency around limitations can improve the scientific rigor of research, help ensure appropriate interpretation of research findings, and make research claims more credible. Despite these benefits, the machine learning (ML) research community lacks well-developed norms around disclosing and discussing limitations. To address this gap, we conduct an iterative design process with 30 ML and ML-adjacent researchers to develop and test REAL ML, a set of guided activities to help ML researchers recognize, explore, and articulate the limitations of their research. Using a three-stage interview and survey study, we identify ML researchers' perceptions of limitations, as well as the challenges they face when recognizing, exploring, and articulating limitations. We develop REAL ML to address some of these practical challenges, and highlight additional cultural challenges that will require broader shifts in community norms to address. We hope our study and REAL ML help move the ML research community toward more active and appropriate engagement with limitations.
