RBoard: A Unified Platform for Reproducible and Reusable Recommender System Benchmarks


Sep 10, 2024

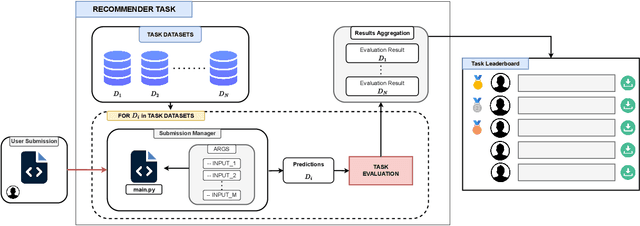

Recommender systems research lacks standardized benchmarks for reproducible experiments and fair algorithm comparisons. We introduce RBoard, a novel framework that addresses these challenges by providing a comprehensive platform for benchmarking diverse recommendation tasks, including click-through rate (CTR) prediction, Top-N recommendation, and others. RBoard's primary objective is to enable fully reproducible and reusable experiments across these scenarios. The framework evaluates algorithms on multiple datasets within each task and aggregates the results into a holistic performance assessment. It implements standardized evaluation protocols to ensure consistency and comparability. To facilitate reproducibility, all user-provided code can be easily downloaded and executed, allowing researchers to reliably replicate studies and build upon previous work. By offering a unified platform for rigorous, reproducible evaluation across various recommendation scenarios, RBoard aims to accelerate progress in the field and establish a new standard for recommender systems benchmarking in both academia and industry. The platform is available at https://rboard.org and the demo video can be found at https://bit.ly/rboard-demo.
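The abstract does not specify how RBoard combines per-dataset results into a single task-level assessment. The following minimal sketch is an assumption, not RBoard's method. It contrasts two common aggregation choices: a plain mean of a metric across datasets, and a mean of per-dataset ranks. The algorithm names, dataset names, and scores are hypothetical placeholders, not reported results.

```python
from statistics import mean

# Hypothetical per-dataset metric values for one task (higher is better).
# Names and numbers are illustrative placeholders, not RBoard results.
results = {
    "algo_a": {"dataset_1": 0.30, "dataset_2": 0.20},
    "algo_b": {"dataset_1": 0.25, "dataset_2": 0.35},
}


def aggregate_mean(results):
    """Average each algorithm's metric across datasets (assumed aggregation)."""
    return {algo: mean(scores.values()) for algo, scores in results.items()}


def aggregate_mean_rank(results):
    """Average each algorithm's per-dataset rank, where 1 is best.

    Assumes every algorithm is evaluated on every dataset; ties are not
    handled specially (tied algorithms get consecutive ranks in arbitrary order).
    """
    datasets = sorted({d for scores in results.values() for d in scores})
    ranks = {algo: [] for algo in results}
    for d in datasets:
        # Sort algorithms by their score on this dataset, best first.
        ordered = sorted(results, key=lambda a: results[a][d], reverse=True)
        for rank, algo in enumerate(ordered, start=1):
            ranks[algo].append(rank)
    return {algo: mean(r) for algo, r in ranks.items()}


print(aggregate_mean(results))
print(aggregate_mean_rank(results))
```

With these toy numbers, the mean-score view favors `algo_b` (0.30 vs. 0.25), while the mean-rank view ties the two algorithms at 1.5. This difference is one reason a benchmark needs to fix its aggregation protocol in advance.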
