Random Machines Regression Approach: an ensemble support vector regression model with free kernel choice


Mar 27, 2020

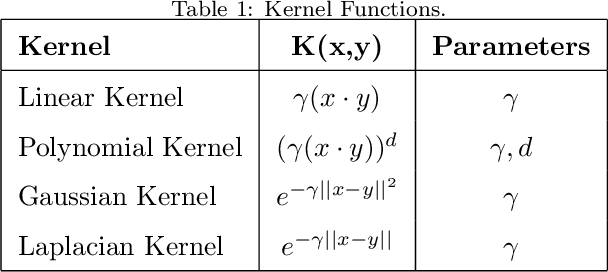

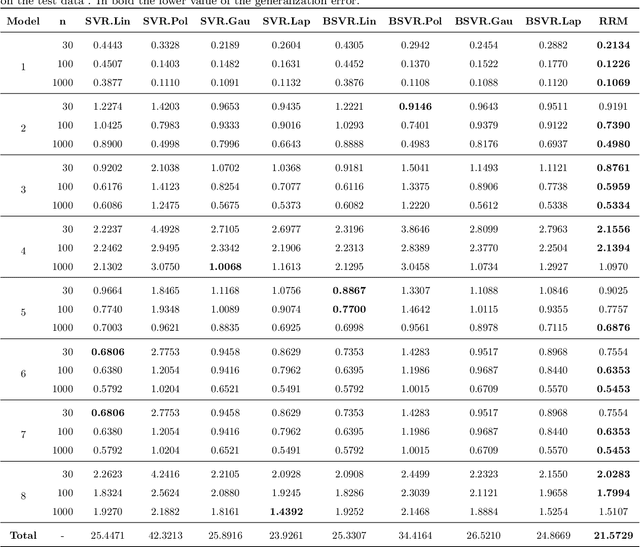

Machine learning techniques always aim to reduce the generalization error. Ensemble methods offer a good way to do this: they combine several models, which yields greater predictive capacity. Random Machines have already been shown to be a strong technique for classification tasks, i.e., one with high predictive power. In this article we propose a procedure for applying the bagged-weighted support vector model to regression problems. Simulation studies were carried out on artificial datasets and on real-data benchmarks. The results show good performance of Regression Random Machines, with lower generalization error and without the need to choose the best kernel function during the tuning process.
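The abstract describes the method only at a high level (bagging, per-model weights, no single kernel chosen up front). Below is a minimal sketch of that idea using scikit-learn's `SVR`, under stated assumptions. The weighting rules are not given in the abstract, so the following are illustrative placeholders and not the paper's formulas: the holdout split used to score kernels, the softmax-style kernel probabilities controlled by `beta`, the inverse out-of-bag RMSE bag weights, and the kernel list. The helper names `fit_random_machines` and `predict_random_machines` are hypothetical.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.svm import SVR

# Candidate kernels; an assumption, not necessarily the paper's list.
KERNELS = ["linear", "poly", "rbf", "sigmoid"]


def _rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def fit_random_machines(X, y, n_bags=25, beta=2.0, seed=0):
    """Hypothetical sketch of a bagged, kernel-sampling SVR ensemble."""
    rng = np.random.default_rng(seed)

    # Assumed step 1: score every kernel once on a holdout split.
    X_tr, X_val, y_tr, y_val = train_test_split(
        X, y, test_size=0.25, random_state=seed
    )
    rmse = np.array(
        [_rmse(y_val, SVR(kernel=k).fit(X_tr, y_tr).predict(X_val)) for k in KERNELS]
    )

    # Assumed step 2: better kernels (lower RMSE) get higher sampling probability.
    lam = np.exp(-beta * rmse / (rmse.min() + 1e-12))
    lam /= lam.sum()

    models, weights = [], []
    n = len(y)
    for _ in range(n_bags):
        idx = rng.integers(0, n, size=n)          # bootstrap sample (with replacement)
        oob = np.setdiff1d(np.arange(n), idx)     # out-of-bag rows
        kernel = KERNELS[rng.choice(len(KERNELS), p=lam)]  # kernel drawn at random
        model = SVR(kernel=kernel).fit(X[idx], y[idx])

        # Assumed step 3: weight each bag by its inverse out-of-bag RMSE.
        err = _rmse(y[oob], model.predict(X[oob])) if len(oob) else rmse.min()
        models.append(model)
        weights.append(1.0 / (err + 1e-12))

    w = np.array(weights)
    return models, w / w.sum()


def predict_random_machines(models, w, X):
    """Weighted average of the bagged SVR predictions."""
    return sum(wi * m.predict(X) for m, wi in zip(models, w))
```

For example, fitting on noisy samples of `sin(x)` and calling `predict_random_machines(models, w, X_new)` returns one prediction per row of `X_new`. The point of the design is that the kernel is never fixed by hand: it is sampled per bag, and poorly performing bags receive less weight in the final average.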

* arXiv admin note: text overlap with arXiv:1911.09411
