RACE: Reinforced Cooperative Autonomous Vehicle Collision AvoidancE


Apr 02, 2020

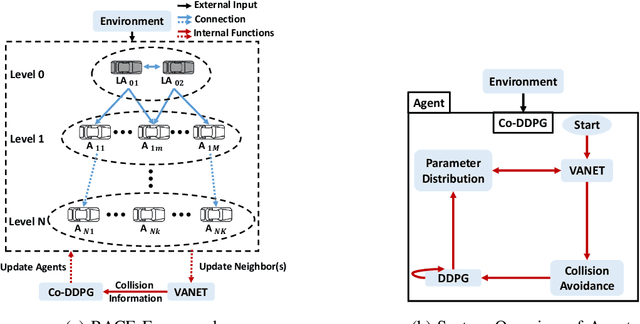

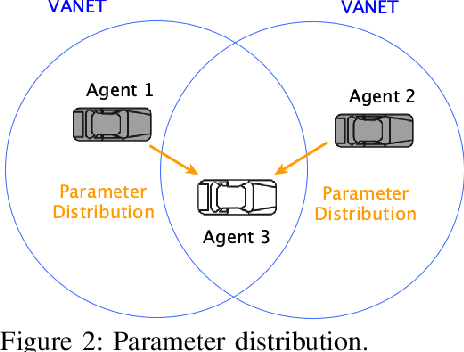

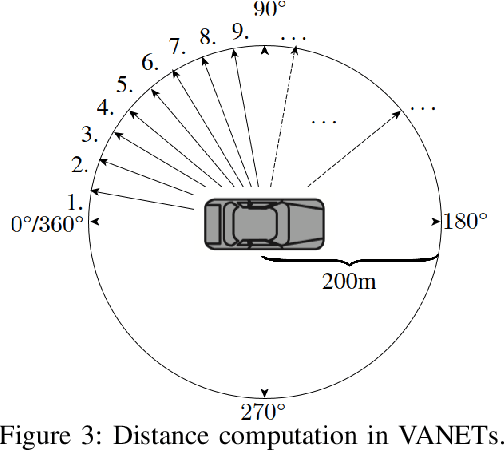

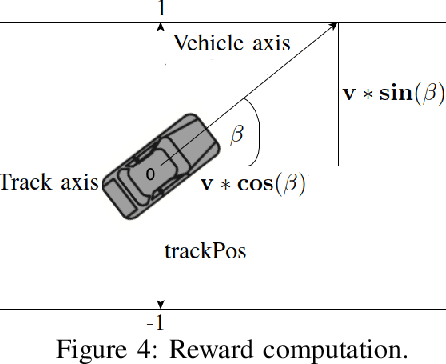

With the rapid development of autonomous driving, collision avoidance has attracted attention from both academia and industry. Many collision avoidance strategies have emerged in recent years, but the dynamic and complex nature of the driving environment makes it challenging to develop robust collision avoidance algorithms. In this paper, we therefore propose a decentralized framework named RACE: Reinforced Cooperative Autonomous Vehicle Collision AvoidancE. Leveraging a hierarchical architecture, we develop an algorithm named Co-DDPG to train autonomous vehicles efficiently. The autonomous vehicles distribute their driving policies through a secure channel. To build the VANET, we use the relative distances obtained by the opponent sensors instead of vehicle locations, which preserves each vehicle's location privacy. With a leader-follower architecture and parameter distribution, RACE accelerates the learning of optimal policies and efficiently utilizes the remaining resources. We implement the RACE framework in the widely used TORCS simulator and conduct various experiments to measure its performance. Evaluations show that RACE quickly learns optimal driving policies and effectively avoids collisions. RACE also scales smoothly with a varying number of participating vehicles. We further compare RACE with existing autonomous driving systems and show that it outperforms them, experiencing 65% fewer collisions during training and exhibiting improved performance under varying vehicle density.
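The abstract does not spell out how parameters are distributed from the leader to the followers. As an illustration only, the following minimal sketch assumes a leader vehicle that periodically shares its policy parameters and followers that blend them into their own with a mixing factor `tau`, in the style of the soft target updates used in DDPG. The names `Params`, `soft_update`, `distribute`, and `tau` are hypothetical and are not taken from the paper or from Co-DDPG.

```python
from typing import Dict, List

# Hypothetical representation: parameter name -> flat list of weights.
Params = Dict[str, List[float]]


def soft_update(follower: Params, leader: Params, tau: float) -> Params:
    """Return tau * leader + (1 - tau) * follower for every parameter.

    tau = 1.0 copies the leader's parameters outright; smaller values
    move the follower only part of the way toward the leader.
    """
    if not 0.0 <= tau <= 1.0:
        raise ValueError("tau must lie in [0, 1]")
    return {
        name: [tau * l + (1.0 - tau) * f
               for l, f in zip(leader[name], follower[name])]
        for name in leader
    }


def distribute(leader: Params, followers: List[Params],
               tau: float = 1.0) -> List[Params]:
    """Apply the leader's parameters to every follower (assumed scheme)."""
    return [soft_update(f, leader, tau) for f in followers]


if __name__ == "__main__":
    leader = {"actor.w": [1.0, 2.0]}
    followers = [{"actor.w": [0.0, 0.0]}, {"actor.w": [3.0, 4.0]}]
    print(distribute(leader, followers, tau=0.5))
```

With `tau=1.0` every follower ends up with an exact copy of the leader's parameters. A smaller `tau` lets each follower keep part of what it has learned locally, which is one plausible way to trade off shared and individual experience.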
