PSF-LO: Parameterized Semantic Features Based Lidar Odometry


Oct 31, 2020

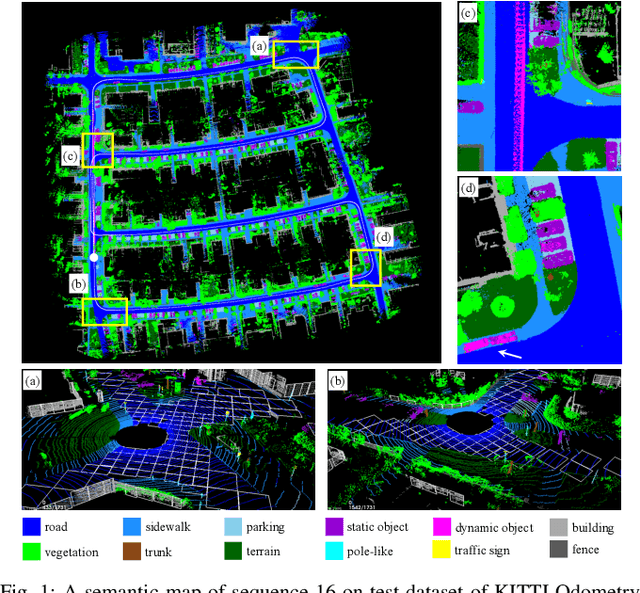

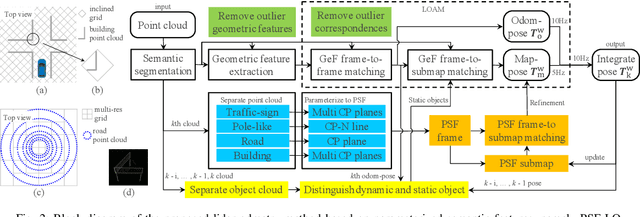

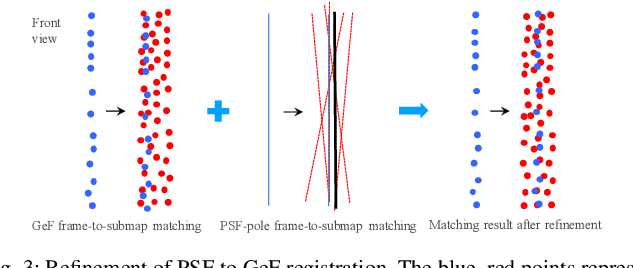

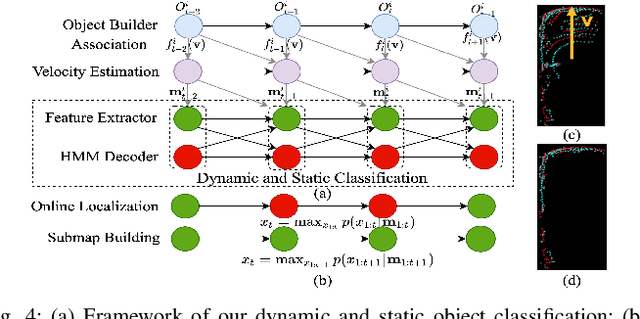

Lidar odometry (LO) is a key technology in many reliable and accurate localization and mapping systems for autonomous driving. State-of-the-art LO methods generally leverage geometric information to perform point cloud registration. Point cloud semantic information, which describes the environment more richly, can further aid registration. We present a novel semantic lidar odometry method based on self-designed parameterized semantic features (PSFs) that achieves low-drift, real-time ego-motion estimation for autonomous vehicles. We first use a convolutional neural network-based algorithm to obtain point-wise semantics from the input laser point cloud, then use the semantic labels to separate the road, building, traffic sign and pole-like points and fit each group separately to obtain the corresponding PSFs. Fast PSF-based matching enables us to refine the registration of geometric features (GeFs), reducing the impact of blurred submap surfaces on the accuracy of GeF matching. In addition, we design an efficient method that accurately recognizes and removes dynamic objects while retaining static ones in the semantic point cloud, which further improves the accuracy of LO. We evaluated our method, PSF-LO, on the public KITTI Odometry Benchmark, where at the time of writing it ranked #1 among semantic lidar methods with an average translation error of 0.82% on the test set.
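The "separate by label, then fit" step can be illustrated with a minimal sketch. It assumes, hypothetically, that the segmentation network outputs one class-name string per point and that each feature is a plain least-squares primitive: a plane for road, building and traffic-sign points, and a 3D line for pole-like points. This is not the authors' PSF parameterization, which the abstract does not specify; the class names and the helpers `fit_plane`, `fit_line`, `fit_semantic_features` and `point_to_plane_distance` are illustrative only. The sketch also fits one primitive per class over the whole scan, whereas a practical system would likely first split each class into instances (individual poles, separate facades).

```python
import numpy as np

# Hypothetical sketch: class names and primitive choices are assumptions,
# not PSF-LO's actual feature parameterization.
PLANAR_CLASSES = {"road", "building", "traffic-sign"}
LINEAR_CLASSES = {"pole"}


def fit_plane(points):
    """Least-squares plane through an (N, 3) array via SVD.

    Returns (unit normal n, offset d) such that n . p + d = 0 on the plane.
    """
    centroid = points.mean(axis=0)
    # The right-singular vector with the smallest singular value is the
    # direction of least variance, i.e. the plane normal.
    _, _, vt = np.linalg.svd(points - centroid)
    normal = vt[-1]
    return normal, -(normal @ centroid)


def fit_line(points):
    """Least-squares 3D line: (point on the line, unit direction)."""
    centroid = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - centroid)
    return centroid, vt[0]  # direction of greatest variance


def point_to_plane_distance(p, normal, d):
    """Signed distance from point p to the plane n . x + d = 0."""
    return float(normal @ p + d)


def fit_semantic_features(points, labels):
    """Split a labeled cloud by class and fit one primitive per class.

    points: (N, 3) float array; labels: length-N sequence of class names.
    Classes with fewer than 3 points are skipped.
    """
    labels = np.asarray(labels)
    features = {}
    for cls in PLANAR_CLASSES | LINEAR_CLASSES:
        subset = points[labels == cls]
        if len(subset) < 3:  # too few points for a meaningful fit
            continue
        if cls in PLANAR_CLASSES:
            features[cls] = ("plane", *fit_plane(subset))
        else:
            features[cls] = ("line", *fit_line(subset))
    return features
```

SVD of the centered points is used here instead of a height-field fit such as z = ax + by + c because it handles vertical structures like building facades and sign panels as well as the near-horizontal road. The signed point-to-plane distance is the kind of residual a plane-shaped feature could contribute to a registration cost.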
