Prompting Language-Informed Distribution for Compositional Zero-Shot Learning


May 23, 2023

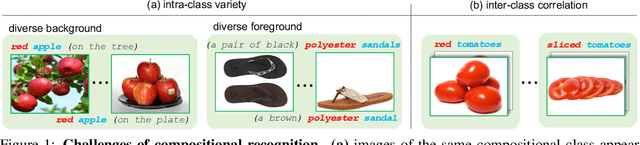

Compositional zero-shot learning (CZSL) aims to recognize unseen compositions of visual concepts (e.g., sliced tomatoes) using models trained only on seen compositions (e.g., sliced potatoes and red tomatoes). Thanks to prompt tuning of large pre-trained vision-language models such as CLIP, recent work reports substantially better CZSL performance than traditional vision-based methods. However, key factors that affect generalization to unseen compositions, namely the diversity and informativeness of the class context and the entanglement between visual primitives (i.e., states and objects), are not properly addressed in the existing CLIP-based CZSL literature. In this paper, we propose to prompt the language-informed distribution, a method we call PLID, for the CZSL task. Specifically, PLID leverages pre-trained large language models (LLMs) to 1) formulate the language-informed class distribution and 2) enhance the compositionality of the softly prompted class embedding. Moreover, a stochastic logit mixup strategy is proposed to dynamically fuse the predictions from the compositional and the primitive logit spaces. Orthogonal to the existing literature on soft, hard, or distributional prompts, our method advocates prompting the LLM-supported class distribution, which leads to better compositional zero-shot generalization. Experimental results on the MIT-States, UT-Zappos, and C-GQA datasets show that PLID outperforms prior art. The code and models will be publicly released.
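To make the idea of fusing compositional and primitive logits more concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the abstract does not specify the mixing rule, so the Beta-distributed mixing weight, the additive combination of state and object logits, the inference-time use of the expected weight, and the names `primitive_pair_logits` and `stochastic_logit_mixup` are all assumptions made purely for illustration.

```python
import random

def primitive_pair_logits(state_logits, object_logits, pairs):
    """Score each (state, object) pair by summing its two primitive logits.

    Assumption: additive combination; the abstract does not state the rule.
    """
    return [state_logits[s] + object_logits[o] for s, o in pairs]

def stochastic_logit_mixup(comp_logits, prim_logits, alpha=1.0, beta=9.0,
                           training=True, rng=random):
    """Convexly mix compositional and primitive-space logits.

    Assumption: the weight is drawn from Beta(alpha, beta) during training
    and replaced by its mean alpha / (alpha + beta) at inference.
    """
    if training:
        lam = rng.betavariate(alpha, beta)  # random mixing weight in [0, 1]
    else:
        lam = alpha / (alpha + beta)        # deterministic expected weight
    fused = [(1.0 - lam) * c + lam * p for c, p in zip(comp_logits, prim_logits)]
    return fused, lam

# Toy example: 2 states x 2 objects, with made-up logit values.
pairs = [(0, 0), (0, 1), (1, 0), (1, 1)]
state_logits = [2.0, 0.5]
object_logits = [0.3, 1.7]
comp_logits = [1.2, 2.9, 0.1, 1.5]

prim_logits = primitive_pair_logits(state_logits, object_logits, pairs)
fused, lam = stochastic_logit_mixup(comp_logits, prim_logits, training=False)
predicted_pair = pairs[max(range(len(fused)), key=fused.__getitem__)]
```

In this toy setup the fused score for each pair is a convex combination of its two logit sources, so a pair that is rated highly in both the compositional and the primitive space stays on top regardless of the sampled weight.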
